Time Series Analysis: Advanced A/B Testing Methods

In the rapidly evolving landscape of data science, A/B testing stands as a cornerstone for data-driven decision-making. Businesses across industries, from e-commerce to healthcare, rely on experimentation to validate hypotheses, optimize user experiences, and drive growth. However, the traditional framework of A/B testing, often predicated on independent and identically distributed (i.i.d.) observations, frequently falls short when confronted with time-dependent data. Metrics like website traffic, sales volume, user engagement, or patient outcomes are inherently sequential, exhibiting trends, seasonality, and autocorrelation. Ignoring these temporal dynamics can lead to biased estimates, incorrect statistical inferences, and suboptimal strategic choices, rendering even well-intentioned experiments misleading. Addressing this challenge requires experimentation methods that build on time series analysis.

The advent of big data and real-time analytics has amplified the need for robust methodologies that can accurately measure the causal impact of interventions in a time series context. Standard A/B tests assume that observations within and between groups are independent, an assumption violated when data points are influenced by their past values. This article delves into cutting-edge techniques that integrate time series analysis principles into the experimentation framework, enabling data scientists to conduct more reliable and insightful A/B tests. We will explore methods designed to address autocorrelation, seasonality, and long-term effects, moving beyond basic comparisons to uncover genuine causal relationships. By embracing these advanced strategies, organizations can unlock deeper insights, refine their experimentation processes, and make truly data-informed decisions in a dynamic world, ensuring the validity and impact of their A/B testing efforts.

The Unique Challenges of A/B Testing with Time Series Data

Traditional A/B testing methods, while powerful for many use cases, encounter significant hurdles when applied directly to metrics that are time-dependent. The underlying assumptions of these methods—primarily independence of observations and often, normality—are frequently violated by time series data, leading to inflated Type I error rates or reduced statistical power. Understanding these fundamental challenges is the first step towards adopting more appropriate and robust advanced A/B testing methods.

Autocorrelation and Seasonality

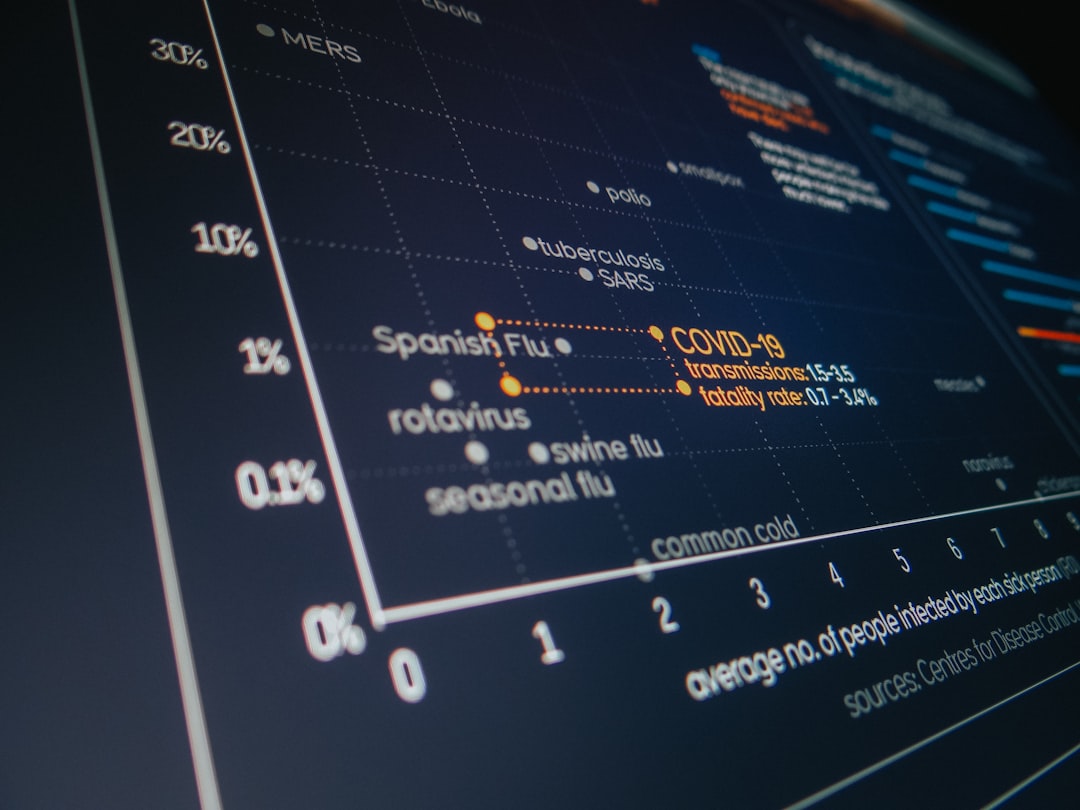

One of the most pervasive issues in time series A/B testing is autocorrelation. Autocorrelation refers to the correlation of a time series with its own past values. For instance, website traffic on a Monday is often correlated with traffic on the previous Sunday or even the previous Monday. When observations within a treatment or control group are positively autocorrelated, which is the usual case for business metrics, the effective sample size is smaller than the nominal sample size. Naive standard errors then underestimate the true variance and overstate statistical significance. This can result in declaring a false positive, incorrectly concluding that a treatment effect exists when it does not.
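To make the effective sample size idea concrete, here is a minimal sketch that assumes the metric behaves like an AR(1) process, for which the common approximation is n_eff ≈ n(1 − ρ)/(1 + ρ), with ρ the lag-1 autocorrelation. The simulated series and all function names are illustrative assumptions, not part of any specific library.

```python
import random


def lag1_autocorr(x):
    """Sample lag-1 autocorrelation of a sequence."""
    n = len(x)
    mean = sum(x) / n
    num = sum((x[t] - mean) * (x[t - 1] - mean) for t in range(1, n))
    den = sum((v - mean) ** 2 for v in x)
    return num / den


def effective_sample_size(n, rho):
    """Approximate effective sample size for the mean of an AR(1) series."""
    return n * (1 - rho) / (1 + rho)


def simulate_ar1(n, rho, seed=0):
    """Simulate a zero-mean AR(1) series with unit-variance Gaussian shocks."""
    rng = random.Random(seed)
    x = [rng.gauss(0, 1)]
    for _ in range(n - 1):
        x.append(rho * x[-1] + rng.gauss(0, 1))
    return x


# Hypothetical metric: 2000 observations with true lag-1 autocorrelation 0.6.
series = simulate_ar1(n=2000, rho=0.6)
rho_hat = lag1_autocorr(series)
n_eff = effective_sample_size(len(series), rho_hat)
print(f"estimated rho = {rho_hat:.2f}, effective n = {n_eff:.0f} of {len(series)}")
```

With ρ around 0.6, only about a quarter of the nominal observations count as independent information, which is why a naive t-test on such data looks far more significant than it should.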

Seasonality, a common source of autocorrelation at regular lags, describes predictable patterns that repeat over a fixed period (e.g., daily, weekly, monthly). Retail sales often peak on weekends, and app usage might surge in the evenings. Failing to account for these regular fluctuations means that observed differences between groups or periods might be attributed to the intervention, when in fact they are merely part of the natural seasonal rhythm. This makes it difficult to isolate the true impact of an experiment.

Carryover Effects and Network Externalities

Another critical challenge arises from carryover effects, also known as lagged effects. These occur when the impact of an intervention is not immediate but unfolds over time, or when the effect of exposure to a treatment persists even after the exposure has ceased. For example, a new feature introduced in an application might initially show a small effect, but user adoption and engagement could grow weeks later as word-of-mouth spreads. Traditional A/B tests, designed for instantaneous or short-term effects, might miss these delayed impacts or misattribute them to other factors.

Network externalities further complicate time series analysis for experimentation. In social networks, online marketplaces, or even ride-sharing platforms, the value of a product or service increases as more people use it. An A/B test in such an environment where users interact can suffer from "spillover" or "contamination." If users in the control group are influenced by users in the treatment group (e.g., seeing their friends use a new feature), the control group no longer serves as a true baseline, blurring the distinction between experimental conditions and undermining the validity of the results. This is especially pertinent for experiments involving recommendations or viral features.

The Stability Assumption Violation

Traditional A/B testing assumes a stable environment where the underlying data generating process remains constant during the experiment. However, real-world systems are rarely static. External events such as holidays, marketing campaigns, competitor actions, or even global economic shifts can significantly alter the baseline behavior of metrics during an experiment. If such an event occurs during an A/B test, it can confound the results, making it difficult to ascertain whether observed changes are due to the experimental treatment or the external factor. This violation of the stability assumption requires methods that can disentangle the intervention's effect from concurrent external influences, moving towards more robust causal inference A/B testing.

Interrupted Time Series (ITS) Analysis for A/B Testing

When randomized controlled trials (RCTs) are not feasible or ethical, or when an intervention has been rolled out to an entire population at a specific point in time, Interrupted Time Series (ITS) A/B test analysis emerges as a powerful quasi-experimental design. ITS is particularly well-suited for evaluating the impact of an intervention or policy change by observing a single group before and after the intervention, while accounting for pre-existing trends and seasonality in the data. This makes it a valuable tool for time series analysis for experimentation when a true control group is unavailable or impractical.

Core Methodology and Data Requirements

The fundamental idea behind ITS is to model the outcome variable over time, both before and after an intervention, and then to assess whether the intervention caused a significant change in the level or slope of the time series. The "interruption" refers to the point in time when the intervention was introduced. A typical ITS model can be represented by a regression equation:

Y_t = β₀ + β₁·Time_t + β₂·Intervention_t + β₃·Time_after_intervention_t + ε_t

- Y_t: The outcome variable at time t.

- β₀: The baseline level of the outcome before the intervention.

- β₁: The pre-intervention trend (change in Y_t per unit of time before the intervention).

- Intervention_t: A dummy variable (0 before intervention, 1 after intervention). β₂ represents the immediate change in the level of the outcome after the intervention.

- Time_after_intervention_t: A continuous variable representing time since the intervention (0 before intervention, 1 for the first time point after intervention, 2 for the second, etc.). β₃ represents the change in the slope of the outcome after the intervention compared to the pre-intervention slope.

- ε_t: The error term, which often requires accounting for autocorrelation.

For ITS to be effective, a sufficient number of data points is needed both before and after the intervention. A common rule of thumb is at least 8-10 time points in each segment, so that the pre-intervention trend and subsequent changes can be estimated with reasonable precision. The data should ideally be equally spaced. It's crucial to ensure that no other significant confounding events occurred simultaneously with the intervention, as ITS assumes the intervention is the primary cause of any observed change.
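The segmented regression above can be sketched in a few lines. The example below is a minimal illustration that assumes NumPy is available, uses simulated data with made-up coefficients, and fits the model by ordinary least squares. It only recovers point estimates; it does not correct the standard errors for autocorrelation.

```python
import numpy as np

n_pre, n_post = 24, 24                      # time points before / after the intervention
n = n_pre + n_post
t = np.arange(1, n + 1)                     # Time_t: 1, 2, ..., n
intervention = (t > n_pre).astype(float)    # Intervention_t: 0 before, 1 after
time_after = np.where(t > n_pre, t - n_pre, 0).astype(float)  # 0 before, then 1, 2, ...

# Design matrix with columns matching beta_0 .. beta_3 in the equation above.
X = np.column_stack([np.ones(n), t, intervention, time_after])

# Hypothetical "true" coefficients used only to simulate an outcome series.
beta_true = np.array([100.0, 0.5, 8.0, 0.3])
rng = np.random.default_rng(42)
y = X @ beta_true + rng.normal(0, 1.0, size=n)

# Ordinary least squares fit of the segmented regression.
beta_hat, *_ = np.linalg.lstsq(X, y, rcond=None)
for name, value in zip(["baseline", "pre-trend", "level change", "slope change"], beta_hat):
    print(f"{name:>12}: {value:.2f}")
```

On real data the residuals should be checked for autocorrelation before trusting any significance test on the level or slope change.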

Practical Application and Quasi-Experimental Design

Consider a scenario where a streaming service implements a new recommendation algorithm across its entire user base on a specific date. A traditional A/B test might not be feasible if the change is systemic. ITS analysis can be applied to evaluate its impact on metrics like "average watch time per user" or "number of new subscriptions."

Example: Evaluating a Feature Rollout on User Engagement

A software company rolls out a major UI redesign to all users on January 1, 2024. They want to assess its impact on daily active users (DAU). They collect DAU data for 6 months before and 6 months after the rollout. The ITS model would look for:

- An immediate jump (β₂) in DAU after Jan 1, indicating a sudden positive or negative reaction.

- A change in the trend (β₃) of DAU after Jan 1, indicating whether the redesign led to a sustained increase or decrease in growth rate.

This quasi-experimental approach, while lacking the randomization of a true A/B test, can provide credible evidence for a causal effect by demonstrating a statistically significant change coinciding with the intervention, after controlling for pre-existing trends and provided no other event coincides with the rollout. It's a key method in the arsenal of advanced A/B testing methods when full randomization is not an option.

Limitations and Considerations

Despite its strengths, ITS analysis has limitations. The primary concern is the potential for confounding by other contemporaneous events. If another significant event (e.g., a major competitor launch, a holiday season, a marketing blitz) occurs exactly at the same time as the intervention, it becomes challenging to disentangle its effect from the intervention's effect. Researchers must diligently investigate and document any such concurrent events.

Another consideration is the choice of statistical model for the error term (ε_t). Simple ordinary least squares (OLS) regression might be insufficient if there's significant autocorrelation in the residuals. Techniques like ARIMA models or generalized least squares (GLS) can be employed to account for correlated errors, ensuring more accurate standard errors and p-values. Furthermore, the selection of the number of pre- and post-intervention data points is crucial; too few can lead to unstable estimates, while too many might dilute the immediate impact of the intervention. Careful thought on the frequency of data collection and the duration of the observation period is essential for reliable ITS results in time series A/B testing.
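A quick diagnostic for autocorrelated residuals is the Durbin-Watson statistic, which is roughly 2(1 − ρ̂) for lag-1 autocorrelation ρ̂. Values near 2 suggest little first-order autocorrelation, values well below 2 suggest positive autocorrelation, and values well above 2 suggest negative autocorrelation. The sketch below computes it by hand on two small, made-up residual vectors.

```python
def durbin_watson(residuals):
    """Durbin-Watson statistic: sum of squared successive differences
    divided by the sum of squared residuals."""
    num = sum((residuals[t] - residuals[t - 1]) ** 2 for t in range(1, len(residuals)))
    den = sum(e ** 2 for e in residuals)
    return num / den


# Hypothetical residuals that persist in sign (positive autocorrelation).
persistent = [1.0, 1.0, 1.0, -1.0, -1.0, -1.0]
# Hypothetical residuals that flip sign every step (negative autocorrelation).
alternating = [1.0, -1.0, 1.0, -1.0]

dw_persistent = durbin_watson(persistent)
dw_alternating = durbin_watson(alternating)
print(f"persistent: {dw_persistent:.2f}, alternating: {dw_alternating:.2f}")
```

A statistic far from 2 on the residuals of an OLS-fitted ITS model is a signal to switch to GLS, an ARIMA error model, or autocorrelation-robust standard errors.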

Causal Impact and Bayesian Structural Time Series (BSTS)

When traditional A/B testing is not feasible, or when an intervention is applied to a specific unit (e.g., a region, a marketing channel, or a single large customer) and a direct control group is unavailable, methods like Causal Impact analysis, powered by Bayesian Structural Time Series (BSTS) models, provide a robust alternative. This approach is particularly powerful for causal inference A/B testing in scenarios where you need to estimate what would have happened in the absence of an intervention for a specific target.

The Synthetic Control Approach

The core idea behind Causal Impact and BSTS is to construct a "synthetic control" for the treated unit. Instead of relying on a randomly assigned control group, a synthetic control is a weighted combination of other untreated units (often called "donor units") that closely matches the treated unit's behavior in the pre-intervention period. The goal is to create a counterfactual: an estimate of what the treated unit's outcome would have been if the intervention had not occurred.

For example, if a new pricing strategy is implemented in one specific city (the treated unit), a synthetic control can be built by identifying other cities that had similar sales patterns, demographics, and market conditions prior to the pricing change. These donor cities are then weighted to create a synthetic city whose pre-intervention sales trajectory closely mimics that of the treated city. The difference between the actual sales in the treated city and the predicted sales of the synthetic control in the post-intervention period is then attributed to the causal effect of the pricing strategy.
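The weighting idea can be illustrated with a deliberately simplified sketch. It assumes NumPy, uses simulated sales for three hypothetical donor cities, and fits donor weights by unconstrained least squares on the pre-intervention period. This is a stand-in for illustration only: the classical synthetic control method constrains weights to be non-negative and sum to one, and CausalImpact uses a Bayesian regression inside a structural time series model.

```python
import numpy as np

rng = np.random.default_rng(7)
n_pre, n_post = 60, 20
n = n_pre + n_post

# Hypothetical donor cities: each column is a daily sales series for one untreated city.
donors = 100 + np.cumsum(rng.normal(0, 1, size=(n, 3)), axis=0)

# Simulated treated city: a mix of the donors plus noise, with a lift of 5 after the change.
true_weights = np.array([0.5, 0.3, 0.2])
treated = donors @ true_weights + rng.normal(0, 0.2, size=n)
treated[n_pre:] += 5.0

# Learn donor weights from the pre-intervention period only.
weights, *_ = np.linalg.lstsq(donors[:n_pre], treated[:n_pre], rcond=None)

# Counterfactual for the post-intervention period and the implied effect.
counterfactual = donors[n_pre:] @ weights
effect = treated[n_pre:] - counterfactual
print(f"weights = {np.round(weights, 2)}, average post-period effect = {effect.mean():.2f}")
```

The estimate is only as good as the assumption that the donor cities were not themselves affected by the pricing change and that the pre-period relationship would have continued to hold.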

This approach addresses the challenge of identifying a suitable control group in unique situations, allowing for powerful time series analysis for experimentation where traditional randomization is impractical.

Bayesian Structural Time Series (BSTS) Model

The CausalImpact package, developed at Google, uses Bayesian Structural Time Series (BSTS) models to formalize the synthetic control approach. BSTS models are flexible and powerful, capable of capturing various time series components such as trend, seasonality, and the influence of exogenous covariates. A BSTS model typically includes:

- Local Trend: Accounts for long-term changes in the series.

- Seasonal Component: Captures recurring patterns (e.g., daily, weekly, yearly).

- Regression Component: Incorporates the influence of other predictor variables (covariates) from the donor units.

During the pre-intervention period, the BSTS model learns the relationship between the treated unit and its donor units, along with its own internal time series dynamics. It then uses this learned relationship to forecast the counterfactual outcome for the treated unit during the post-intervention period. The Bayesian framework provides probabilistic estimates of the causal effect, including credible intervals, which many practitioners find easier to interpret than frequentist confidence intervals.

The BSTS model's strength lies in its ability to automatically select and weight the most relevant donor units and covariates (in CausalImpact, through a spike-and-slab prior on the regression coefficients), effectively building a robust counterfactual. This makes it a sophisticated tool within advanced A/B testing methods for complex time-series scenarios.

Interpreting Causal Impact Results

The output of a Causal Impact analysis typically consists of several plots and statistical summaries:

- Time Series Plot: Shows the actual observed outcome, the predicted counterfactual (synthetic control) outcome, and the difference between the two (the causal effect) over time. This visual representation helps to quickly grasp the magnitude and duration of the intervention's impact.

- Cumulative Impact Plot: Displays the cumulative sum of the difference between the observed and predicted outcomes, providing a clear picture of the total effect of the intervention over the post-intervention period.

- Summary Statistics: Provides a point estimate of the causal effect, along with a credible interval and a posterior probability of a non-zero effect. For example, it might state that the intervention led to a 15% increase in sales with a 95% credible interval of [10%, 20%], and a posterior probability of 99% that the effect is positive.

Example: Website Redesign Impact on Conversion Rate

Imagine a company redesigns its website in Country A. They cannot run a traditional A/B test because the redesign is rolled out to all visitors in Country A at once. They can use Causal Impact instead. They gather conversion rate data for Country A (treated unit) and several other similar countries (donor units) for a period before and after the redesign. The BSTS model would then predict what Country A's conversion rate would have been without the redesign, based on the behavior of the donor countries. The observed conversion rate in Country A after the redesign is compared to this predicted counterfactual. If the actual conversion rate falls outside the credible interval of the prediction, it suggests a causal impact. This is a prime application for causal inference A/B testing, particularly useful for policy or large-scale product changes.

| Feature | Traditional A/B Test | Interrupted Time Series (ITS) | Causal Impact (BSTS) |
|---|---|---|---|
| Control Group | Randomly assigned | Implicit (pre-intervention period) | Synthetic control (weighted donor units) |
| Randomization | Yes | No (quasi-experimental) | No (quasi-experimental) |
| Data Requirement | Independent observations | Time series, pre/post intervention | Time series, pre/post intervention + donor units |
| Primary Use Case | User-level feature changes | System-wide policy/feature rollouts | Unit-level intervention (e.g., specific city, channel) |
| Handles Time-Dependency | No (assumes i.i.d.) | Yes (models trend/level change) | Yes (models trend, seasonality, covariates) |
| Causal Inference Strength | High (if well-randomized) | Moderate (susceptible to confounders) | Moderate to high (depends on donor quality and on donors being unaffected by the intervention) |

Sequential A/B Testing for Dynamic Time Series Data

Traditional A/B tests often rely on a fixed sample size and duration, determined upfront by power analysis. However, in dynamic environments where data streams continuously and outcomes evolve over time, such fixed designs can be inefficient or even misleading. Sequential A/B testing with time series data offers a more agile and often more ethical approach, allowing for continuous monitoring and early stopping of experiments once a statistically significant result (or lack thereof) is detected.

Adapting Sequential Methods for Time-Dependent Metrics

Sequential testing involves analyzing data as it accumulates, with predefined stopping rules that dictate when to conclude the experiment. This contrasts with fixed-horizon tests, where data is only analyzed once at the end. For time series data, adapting sequential methods requires careful consideration of autocorrelation and other temporal dependencies. Directly applying off-the-shelf sequential tests designed for i.i.d. data can lead to inflated Type I error rates, because autocorrelation makes the variance estimate at each "look" too small, and repeated looks at accumulating data compound the problem when the stopping rule does not account for them. Advanced sequential methods, such as those based on Group Sequential Designs (GSD) or Always Valid Inference (AVI) frameworks (e.g., using confidence sequences), are designed to control the overall Type I error rate even with multiple interim analyses. When dealing with time series, these methods can be modified to account for the effective sample size or adjusted variance estimates that arise due to autocorrelation, ensuring valid inference despite the sequential nature of analysis.

Advantages of Early Stopping and Continuous Monitoring

The primary advantage of sequential A/B testing is its efficiency. By allowing for early stopping, businesses can:

1. Accelerate Learning: Experiments that show a strong positive or negative effect can be concluded faster, allowing successful changes to be deployed sooner or detrimental changes to be rolled back quickly, minimizing opportunity cost or potential harm.

2. Optimize Resource Allocation: Experiments that are clearly not showing a significant effect can also be stopped early, freeing up resources (e.g., traffic, engineering effort) for other tests.

3. Ethical Considerations: For experiments that might negatively impact user experience or revenue, early detection of negative effects is crucial for ethical reasons, preventing prolonged exposure of users to a suboptimal treatment.

4. Adapt to Dynamic Environments: In rapidly changing markets, waiting for a fixed duration might mean the market conditions or user behaviors have shifted, making the initial hypothesis less relevant. Sequential testing allows for quicker adaptation.

Continuous monitoring, inherent in sequential designs, also provides richer insights into the evolution of treatment effects over time, which is particularly valuable for understanding dynamic metrics in time series A/B testing.

Statistical Power and Sample Size Considerations

While sequential testing offers efficiency, it comes with specific statistical design considerations. The stopping boundaries (the thresholds for significance at each interim analysis) must be carefully calibrated to maintain the desired overall Type I error rate (alpha). Methods like the O'Brien-Fleming or Pocock boundaries are commonly used in GSDs to adjust these thresholds. For sequential A/B testing with time series data, power analysis becomes more complex. The presence of autocorrelation means that effective sample size is smaller than the observed sample size. Therefore, when designing a sequential test, it's crucial to either:

1. Adjust Sample Size: Inflate the required sample size to account for the loss of independent observations due to autocorrelation.

2. Model Autocorrelation: Incorporate time series models (e.g., ARIMA) into the sequential analysis framework to properly estimate the variance and thus the statistical significance at each look.

Failing to account for autocorrelation can lead to stopping too early when there is no real effect, or conversely, continuing an experiment longer than necessary. Modern experimentation platforms are increasingly integrating these advanced sequential methods, making robust time series A/B testing more accessible.
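To show how a group sequential design rations its Type I error across looks, the sketch below evaluates the Lan-DeMets O'Brien-Fleming-type alpha spending function, α(t) = 2(1 − Φ(z_{α/2}/√t)), at four equally spaced information fractions. The number and spacing of looks are assumptions for illustration. Turning the spent alpha into exact critical values requires numerical integration over the joint distribution of the interim statistics, which is not shown here.

```python
from statistics import NormalDist


def obf_alpha_spent(info_fraction, alpha=0.05):
    """Cumulative two-sided alpha spent at a given information fraction (0 < t <= 1)
    under the Lan-DeMets O'Brien-Fleming-type spending function."""
    z = NormalDist().inv_cdf(1 - alpha / 2)
    return 2 * (1 - NormalDist().cdf(z / info_fraction ** 0.5))


# Hypothetical plan: four looks at 25%, 50%, 75%, and 100% of the planned information.
looks = [0.25, 0.5, 0.75, 1.0]
spent = [obf_alpha_spent(t) for t in looks]
increments = [spent[0]] + [b - a for a, b in zip(spent, spent[1:])]

for t, cumulative, step in zip(looks, spent, increments):
    print(f"information {t:.2f}: cumulative alpha {cumulative:.5f}, spent at this look {step:.5f}")
```

Very little alpha is spent at early looks, so early stopping requires strong evidence. With autocorrelated metrics, the test statistic compared against these boundaries should itself be built on an autocorrelation-adjusted variance, and the information fraction should reflect effective rather than nominal sample size.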

Geo-Experimental A/B Testing and Spatial-Temporal Designs

In many business contexts, especially for brick-and-mortar retail, ride-sharing, food delivery, or localized digital services, users and their behaviors are geographically interdependent. Traditional user-level A/B testing can suffer from severe spillover effects where treatment group users influence control group users in the same vicinity. This invalidates the independence assumption and makes it impossible to isolate the true treatment effect. Geo-experimental A/B testing, a specialized form of time series A/B testing, addresses this by randomizing at a geographic level rather than an individual user level.

Defining Geographic Units for Experimentation

The first critical step in a geo-experiment is to define appropriate geographic units. This could be cities, zip codes, census tracts, or even custom polygons. The choice of unit depends on the nature of the intervention and the expected range of spillover effects. For example, a marketing campaign for a local restaurant might use zip codes as units, while a change in a national delivery service algorithm might require larger city-level units.

Once units are defined, they are assigned to treatment and control groups. The goal is to create groups of geographic units that are comparable in terms of relevant metrics (e.g., historical sales, population density, demographics) and isolated enough to minimize cross-group contamination. This involves careful matching and stratification techniques to ensure balance across groups, similar to how individual users are balanced in a traditional A/B test. The temporal component comes into play as historical time series data for these geographic units are used for matching and baseline comparisons.
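One simple way to build comparable groups is to rank geographic units by a historical metric, pair neighbours in that ranking, and randomize within each pair. The sketch below does this with the standard library only. The city names, the sales figures, and the choice of the historical mean as the matching criterion are all illustrative assumptions; real designs usually match on several characteristics and on the shape of the time series, not just its level.

```python
import random


def matched_pair_assignment(history, seed=0):
    """Pair units with similar historical means and randomize within each pair.

    history: dict mapping unit name -> list of historical metric values.
    Returns a dict mapping unit name -> "treatment" or "control".
    An unpaired unit (odd count) is left out of the assignment.
    """
    rng = random.Random(seed)
    ranked = sorted(history, key=lambda unit: sum(history[unit]) / len(history[unit]))
    assignment = {}
    for i in range(0, len(ranked) - 1, 2):
        pair = [ranked[i], ranked[i + 1]]
        rng.shuffle(pair)  # coin flip decides which unit of the pair is treated
        assignment[pair[0]] = "treatment"
        assignment[pair[1]] = "control"
    return assignment


# Hypothetical weekly sales history for six cities.
history = {
    "city_a": [120, 125, 130],
    "city_b": [118, 122, 128],
    "city_c": [60, 62, 61],
    "city_d": [58, 63, 64],
    "city_e": [300, 310, 305],
    "city_f": [295, 305, 312],
}
assignment = matched_pair_assignment(history, seed=42)
print(assignment)
```

Because randomization happens inside each pair, treatment and control end up with one large, one mid-sized, and one small city each, whatever the coin flips turn out to be.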

Mitigating Spillover Effects in Geo-Experiments

The primary motivation for geo-experiments is to mitigate spillover effects. By randomizing at a geographic level, the entire population within a geographic unit receives either the treatment or the control. This ensures that interactions within the unit are consistent with the assigned condition, thereby minimizing contamination across experimental groups. However, some level of spillover might still occur, especially in adjacent units or through broader market dynamics.

To further reduce spillover, researchers often employ buffer zones between treatment and control units, or they might exclude certain highly interconnected units from the experiment. The design might also consider network structures, grouping interconnected geographic areas together to ensure that the treatment effect propagates within a group rather than across groups. Understanding these spatial dependencies is crucial for valid causal inference A/B testing in geo-experiments.

Advanced Matching and Synthetic Control for Geo-Experiments

Pure randomization of geographic units can be challenging, especially when the number of units is small, or when certain units are inherently unique. This is where advanced A/B testing methods like matching and synthetic control become invaluable for geo-experiments:

- Matched Pair Designs: Geographic units can be paired based on their historical time series performance and other relevant characteristics. One unit from each pair is then randomly assigned to treatment, and the other to control. This helps improve balance and statistical power.

- Synthetic Control for Geo-Experiments: As discussed earlier, synthetic control can be adapted for geo-experiments. If an intervention is rolled out to a single city or a small cluster of cities, a synthetic control group can be constructed from other similar, untreated cities. This allows for robust estimation of the causal effect without requiring a large number of randomized geographic units. The BSTS model is particularly effective here, as it naturally handles the time series nature of the data and the influence of covariates from donor regions.

For example, a ride-sharing company wants to test a new incentive program for drivers in City X. Instead of randomly assigning drivers within City X (which would lead to severe spillover as drivers interact), they designate City X as the treatment group. They then use historical ride data from similar cities (Y, Z, W) to construct a synthetic control for City X. By comparing the actual driver retention in City X post-intervention to the synthetic control's predicted retention, they can estimate the causal impact. This is a powerful application of time series analysis for experimentation in a geographically constrained context.

Advanced Statistical Considerations and Pitfalls in Time Series A/B Testing

Conducting robust A/B tests with time series data demands a deeper understanding of statistical principles beyond what is typically required for i.i.d. data. Ignoring these nuances can lead to invalid conclusions, compromising the integrity of time series A/B testing. This section highlights critical statistical considerations and common pitfalls.

Stationarity, Cointegration, and Unit Roots

A fundamental concept in time series analysis is stationarity. A time series is stationary if its statistical properties (mean, variance, autocorrelation) do not change over time. Many statistical tests and models assume stationarity. Non-stationary series, particularly those with stochastic trends (unit roots), can lead to spurious regressions where two unrelated series appear to be correlated simply because they both trend. When comparing two time series (e.g., treatment vs. control group metrics), it's crucial to assess their stationarity. If both series are non-stationary but share a common long-term trend, they might be cointegrated. Cointegration implies a long-run equilibrium relationship between series, and deviations from this equilibrium can be modeled. In time series A/B testing, understanding these properties is vital for selecting appropriate statistical models (e.g., differencing non-stationary series, using error correction models for cointegrated series) to ensure that observed differences are truly due to the intervention and not just shared non-stationary behavior.

Multiple Testing and False Discovery Rate Control

In advanced A/B testing, especially when conducting continuous monitoring in sequential tests or analyzing multiple metrics simultaneously, the problem of multiple comparisons arises. Each statistical test carries a risk of a Type I error (false positive). If you perform many tests, the probability of at least one false positive increases dramatically. For instance, with an alpha of 0.05, if you run 20 independent tests, there's a 64% chance of at least one false positive.

For advanced A/B testing methods, it's crucial to control the family-wise error rate (FWER) or the false discovery rate (FDR).

- FWER Control: Methods like Bonferroni correction or Holm-Bonferroni adjust p-values to ensure that the probability of making any false positive is below a specified alpha. These are often too conservative, reducing statistical power.

- FDR Control: Methods like Benjamini-Hochberg aim to control the expected proportion of false positives among all rejected null hypotheses. This is often preferred in exploratory analysis or when many metrics are being tracked, as it offers a better balance between Type I error control and statistical power.
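The corrections above are short enough to write out directly. The following sketch uses only the standard library; the five p-values are made up for illustration. It also reproduces the 64% figure as 1 − (1 − 0.05)^20.

```python
def fwer_independent(alpha, m):
    """Probability of at least one false positive across m independent tests."""
    return 1 - (1 - alpha) ** m


def bonferroni(pvalues, alpha=0.05):
    """Reject when p <= alpha / m."""
    m = len(pvalues)
    return [p <= alpha / m for p in pvalues]


def holm(pvalues, alpha=0.05):
    """Holm step-down: compare sorted p-values to alpha / (m - rank), stop at first failure."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    reject = [False] * m
    for rank, i in enumerate(order):
        if pvalues[i] <= alpha / (m - rank):
            reject[i] = True
        else:
            break
    return reject


def benjamini_hochberg(pvalues, q=0.05):
    """Benjamini-Hochberg step-up: reject the k smallest p-values, where k is the
    largest rank with p_(k) <= (k / m) * q."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    k_max = 0
    for rank, i in enumerate(order, start=1):
        if pvalues[i] <= rank / m * q:
            k_max = rank
    reject = [False] * m
    for i in order[:k_max]:
        reject[i] = True
    return reject


# Hypothetical p-values for five metrics tracked in one experiment.
pvalues = [0.001, 0.008, 0.012, 0.039, 0.20]
print(f"FWER for 20 independent tests at alpha 0.05: {fwer_independent(0.05, 20):.2f}")
print("Bonferroni rejections:", sum(bonferroni(pvalues)))
print("Holm rejections:", sum(holm(pvalues)))
print("Benjamini-Hochberg rejections:", sum(benjamini_hochberg(pvalues)))
```

On these inputs the three procedures reject progressively more hypotheses, which mirrors the trade-off described above: FWER control is stricter, while FDR control gives up some protection against any single false positive in exchange for power.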

Applying these corrections is especially critical in sequential A/B testing with time series data, where interim analyses are essentially multiple looks at the accumulating data, increasing the risk of false positives if not properly accounted for in the stopping rules.

Robustness Checks and Sensitivity Analysis

Given the complexity of time series data and the models used in advanced A/B testing, it's imperative to perform robustness checks and sensitivity analyses. These steps help to build confidence in the results by evaluating how sensitive the conclusions are to different modeling assumptions or data perturbations. Examples include:

- Varying Model Specifications: Testing different lags for autocorrelation, different seasonal components, or different exogenous covariates in your time series models. For Causal Impact, this might involve trying different sets of donor units or different prior distributions.

- Different Time Windows: Analyzing the data using slightly different pre- and post-intervention periods to see if the effect remains consistent.

- Subgroup Analysis: Examining if the treatment effect varies across different segments of the population.

- Permutation Tests/Bootstrapping: Non-parametric methods that can provide robust p-values or confidence intervals without strong distributional assumptions, particularly useful when underlying assumptions of parametric tests might be violated.

These checks are vital for ensuring that the observed effects are not merely artifacts of specific modeling choices but represent genuine impacts of the intervention. They strengthen the validity of causal inference A/B testing and provide a more comprehensive understanding of the experiment's outcomes.
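For autocorrelated data, ordinary bootstrapping of individual observations breaks the dependence structure. A common alternative is the moving block bootstrap, which resamples contiguous blocks instead. The sketch below applies it to a simulated series of daily treatment-minus-control differences. The block length of 7 days, the simulated data, and the function names are assumptions for illustration.

```python
import random


def moving_block_bootstrap(series, block_len, rng):
    """Resample a series by concatenating randomly chosen contiguous blocks."""
    n = len(series)
    out = []
    while len(out) < n:
        start = rng.randrange(0, n - block_len + 1)
        out.extend(series[start:start + block_len])
    return out[:n]


def block_bootstrap_ci(series, block_len=7, n_boot=2000, level=0.95, seed=0):
    """Percentile confidence interval for the mean using the moving block bootstrap."""
    rng = random.Random(seed)
    means = []
    for _ in range(n_boot):
        sample = moving_block_bootstrap(series, block_len, rng)
        means.append(sum(sample) / len(sample))
    means.sort()
    lo = means[int((1 - level) / 2 * n_boot)]
    hi = means[int((1 + level) / 2 * n_boot) - 1]
    return lo, hi


# Hypothetical daily differences (treatment minus control) over 12 weeks:
# a true lift of 2.0 plus AR(1) noise.
data_rng = random.Random(1)
diffs, noise = [], 0.0
for _ in range(84):
    noise = 0.5 * noise + data_rng.gauss(0, 1)
    diffs.append(2.0 + noise)

point_estimate = sum(diffs) / len(diffs)
lo, hi = block_bootstrap_ci(diffs)
print(f"mean difference = {point_estimate:.2f}, 95% block-bootstrap CI = [{lo:.2f}, {hi:.2f}]")
```

Rerunning with a few different block lengths is itself a useful sensitivity check: if the interval changes materially, the conclusion depends on how the dependence is modeled.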

Practical Implementation and Tooling for Advanced Time Series A/B Testing

Implementing advanced A/B testing methods for time series data requires not just statistical knowledge but also robust data infrastructure, programming skills, and a strategic approach to experimentation. The complexity of these methods means that specialized tools and platforms are often necessary to streamline the process and ensure accuracy.

Leveraging Open-Source Libraries and Platforms

The data science ecosystem offers powerful open-source libraries that facilitate the application of advanced time series A/B testing methods:

- Python\'s

statsmodels and Prophet:statsmodels provides extensive functionalities for time series analysis, including ARIMA, SARIMAX, and Generalized Least Squares (GLS) models, which are crucial for handling autocorrelation in ITS designs.- Facebook\'s

Prophet is excellent for forecasting and detecting anomalies in time series data with strong seasonal components, making it useful for establishing baselines and predicting counterfactuals in a more lightweight causal impact-style analysis.

- R\'s

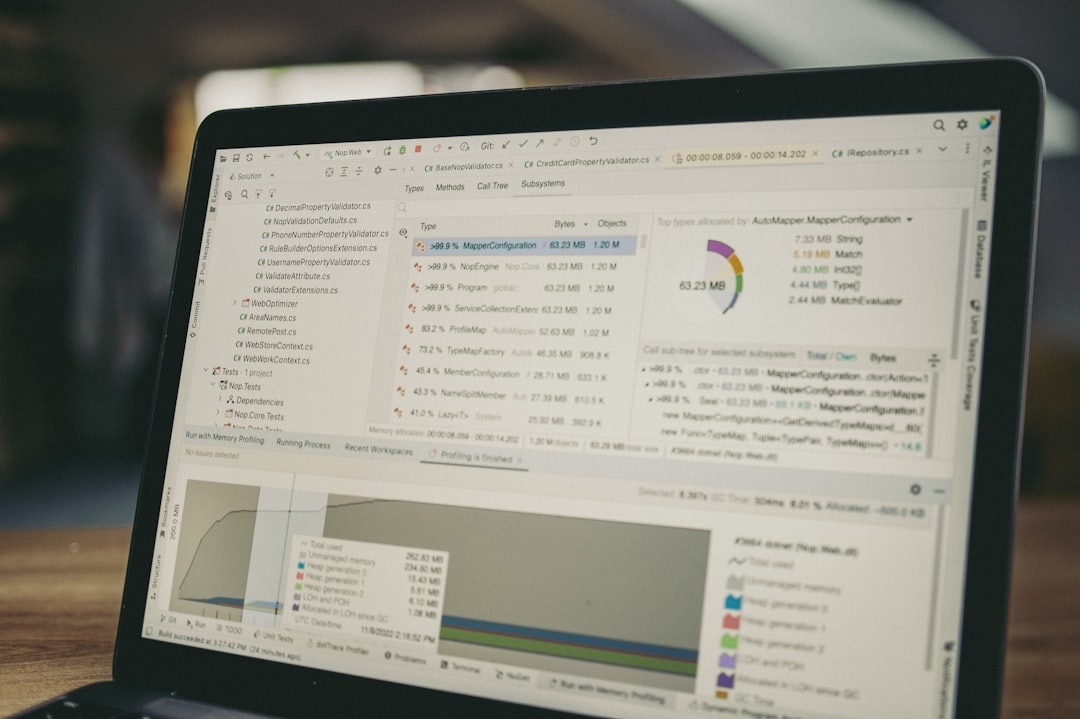

CausalImpact Package: Developed by Google, this R package (with a Python port available) directly implements the Bayesian Structural Time Series (BSTS) model for Causal Impact analysis. It\'s user-friendly and provides comprehensive outputs, making it the go-to tool for estimating the causal effect of an intervention on a single unit using synthetic control. - Python\'s

scipy.stats and statsmodels for Sequential Testing: While direct sequential A/B testing packages for time series are less common, the statistical primitives in these libraries can be combined to build custom sequential analysis frameworks, especially for group sequential designs. - PyMC3/PyMC for Bayesian Modeling: For highly customized Bayesian approaches to time series A/B testing, probabilistic programming libraries like PyMC3 (or its successor PyMC) offer the flexibility to build bespoke models for complex causal inference scenarios.

These tools empower data scientists to move beyond basic t-tests and apply sophisticated time series analysis for experimentation.

Building an Experimentation Platform for Time Series

For organizations conducting frequent time series A/B tests, building or adopting an experimentation platform tailored for such analyses is a strategic investment. Such a platform should ideally:

- Automate Data Ingestion and ETL: Seamlessly collect and preprocess time-series metrics from various sources.

- Support Advanced Experiment Designs: Offer built-in support for ITS, Causal Impact, geo-experiments, and sequential testing, abstracting away much of the underlying statistical complexity.

- Handle Randomization and Assignment: For geo-experiments, provide robust mechanisms for geographic unit assignment and balancing.

- Provide Real-time Monitoring and Alerting: Crucial for sequential testing, allowing for immediate detection of significant effects or issues.

- Integrate with Visualization Tools: Generate clear, interpretable plots and dashboards for experiment results.

- Manage Metadata: Track experiment configurations, start/end dates, intervention details, and other relevant information.
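The monitoring requirement for sequential testing can be sketched with scipy.stats alone. The example below computes the cumulative alpha spent under a Lan-DeMets O'Brien-Fleming-type spending function and turns the increments into per-look z thresholds. Note the simplification: testing each look at its incremental alpha relies on a union bound, so it is conservative and is not the exact group sequential boundary, which requires numerical integration. The function names and the four equally spaced looks are assumptions made for illustration, and the z statistic passed in is assumed to have been computed with standard errors that already account for autocorrelation.

```python
import numpy as np
from scipy.stats import norm

def obf_alpha_spent(info_fraction, alpha=0.05):
    """Cumulative two-sided alpha spent at a given information fraction
    (Lan-DeMets O'Brien-Fleming-type spending function)."""
    t = np.asarray(info_fraction, dtype=float)
    z = norm.isf(alpha / 2)
    return 2.0 * norm.sf(z / np.sqrt(t))

def conservative_thresholds(info_fractions, alpha=0.05):
    """Per-look |z| thresholds from incremental alpha (union bound, conservative)."""
    spent = obf_alpha_spent(info_fractions, alpha)
    increments = np.diff(spent, prepend=0.0)
    return norm.isf(increments / 2)

looks = [0.25, 0.5, 0.75, 1.0]       # assumed: four planned interim analyses
spent = obf_alpha_spent(looks)
thresholds = conservative_thresholds(looks)

def should_stop(z_stat, look_index):
    """True if the observed z statistic crosses the threshold for this look."""
    return abs(z_stat) >= thresholds[look_index]

for frac, a, z in zip(looks, spent, thresholds):
    print(f"info fraction {frac:.2f}: cumulative alpha {a:.5f}, |z| threshold {z:.3f}")
```

Early looks receive very little alpha, so only large effects trigger an early stop, while the final look keeps a threshold only somewhat above the fixed-sample value.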

Companies like Google, Netflix, and Airbnb have developed sophisticated internal experimentation platforms that incorporate many of these advanced A/B testing methods, demonstrating their value in a data-intensive environment.

Data Infrastructure and Monitoring

Robust data infrastructure is the backbone of any advanced A/B testing framework. This includes:

- High-Resolution Data Collection: Capturing time series data at a sufficiently granular level (e.g., hourly, daily) to detect subtle effects and properly model temporal patterns.

- Data Warehousing and Lakes: Storing historical time series data efficiently and accessibly for baseline comparisons, matching, and model training.

- Automated Data Quality Checks: Implementing systems to monitor data freshness, completeness, and accuracy, as missing or erroneous time series data can severely compromise experiment validity.

- Monitoring for External Confounders: Establishing mechanisms to track external events (e.g., marketing campaigns, news cycles, competitor actions) that could confound experiment results. This contextual information is vital for interpreting outcomes in causal inference A/B testing.

Without a solid data foundation, even the most statistically sound advanced A/B testing methods will yield unreliable results. Continuous monitoring of both the experimental metrics and the underlying data pipelines is paramount for successful and trustworthy experimentation.
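The automated quality checks described above can start very simply. The sketch below, assuming a daily metric stored in a pandas DataFrame with hypothetical `date` and `value` columns, reports missing dates, duplicate timestamps, and null values.

```python
import pandas as pd

def check_daily_series(df, ts_col="date", value_col="value"):
    """Return basic quality diagnostics for a daily metric series."""
    ts = pd.to_datetime(df[ts_col])
    # Every calendar day between the first and last observation is expected
    expected = pd.date_range(ts.min(), ts.max(), freq="D")
    missing = expected[~expected.isin(ts)]
    return {
        "missing_dates": missing.tolist(),
        "duplicate_timestamps": int(ts.duplicated().sum()),
        "null_values": int(df[value_col].isna().sum()),
    }

# Toy data with one gap (Jan 3), one duplicated day, and one null value
sample = pd.DataFrame({
    "date": ["2024-01-01", "2024-01-02", "2024-01-02", "2024-01-04", "2024-01-05"],
    "value": [10.0, 11.5, 11.5, None, 12.0],
})
report = check_daily_series(sample)
print(report)
```

A production pipeline would typically run checks like these on a schedule and alert before an affected series is used as a baseline or as a control.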

Frequently Asked Questions (FAQ)

Q1: When should I use advanced time series A/B testing methods instead of traditional A/B tests?

You should consider advanced time series methods when your key performance indicators (KPIs) exhibit strong temporal dependencies (e.g., trends, seasonality, autocorrelation), when you cannot randomize users (e.g., system-wide rollouts, geo-specific interventions), or when there's a risk of significant spillover effects between treatment and control groups. Traditional A/B tests assume independent observations, which is often violated by time series data, leading to incorrect statistical conclusions.

Q2: What is the main difference between Interrupted Time Series (ITS) and Causal Impact (BSTS)?

Both ITS and Causal Impact are quasi-experimental methods for causal inference A/B testing on time series data. ITS typically assesses the impact of an intervention on a single group by comparing its pre- and post-intervention trends and levels, assuming no other significant confounders. Causal Impact, using Bayesian Structural Time Series (BSTS), goes further by creating a "synthetic control" from similar, untreated units to estimate what would have happened in the absence of the intervention, providing a more robust counterfactual in scenarios where a direct control group is absent.
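The counterfactual idea can be illustrated with a deliberately simplified stand-in. The sketch below is not BSTS and produces no posterior intervals: it fits an ordinary least squares relationship between a treated series and a single untreated control series on the pre-period, then projects that relationship into the post-period. All data are simulated, and the series names, the intervention index, and the lift of 6 are assumptions.

```python
import numpy as np

rng = np.random.default_rng(1)
n, t0 = 100, 70                      # assumed: 100 days, intervention on day 70
t = np.arange(n)

# Untreated control series with weekly seasonality
control = 100 + 10 * np.sin(2 * np.pi * t / 7) + rng.normal(0, 2, n)
# Treated series tracks the control, then gains a simulated lift after the intervention
treated = 20 + 0.8 * control + rng.normal(0, 1, n)
treated[t0:] += 6.0

# Learn the treated ~ control relationship from the pre-period only
A = np.column_stack([np.ones(t0), control[:t0]])
coef, *_ = np.linalg.lstsq(A, treated[:t0], rcond=None)

# Project the pre-period relationship forward to get the counterfactual
counterfactual = coef[0] + coef[1] * control[t0:]
pointwise_effect = treated[t0:] - counterfactual
avg_effect = float(pointwise_effect.mean())

print(f"estimated average post-period effect: {avg_effect:.2f}")
```

The estimate is only as good as the assumption that the control series was unaffected by the intervention and that its relationship to the treated series stayed stable.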

Q3: How do carryover effects impact A/B testing with time series data?

Carryover effects occur when the impact of a treatment is delayed or persists beyond the initial exposure. In time series A/B testing, these effects can lead to underestimation of the true treatment impact if the experiment is stopped too early, or misattribution of long-term changes if not properly modeled. Advanced methods need to account for these lagged effects, often requiring longer observation periods or dynamic models to capture the full temporal unfolding of the intervention's influence.

Q4: Can I combine these advanced methods with traditional A/B testing?

Absolutely. These advanced methods complement traditional A/B testing rather than replace it. For instance, you might run a traditional user-level A/B test on a new feature, then use ITS or Causal Impact to analyze the system-wide impact of rolling out that feature to everyone. Geo-experiments might use traditional A/B testing logic within geographic units if appropriate, but the randomization is at the geo-level. Understanding the limitations of each method allows for a more comprehensive experimentation strategy.

Q5: What are the biggest pitfalls to avoid in advanced time series A/B testing?

Key pitfalls include:

- Ignoring Autocorrelation: Positive autocorrelation makes naive standard errors too small, which inflates the Type I error rate. Always account for it in your models.

- Confounding Events: Failing to identify and control for external events that coincide with your intervention.

- Insufficient Data: Not having enough pre- and post-intervention data points for ITS or enough relevant donor units for Causal Impact.

- Ignoring Multiple Comparisons: Not adjusting p-values when performing multiple statistical tests, especially in sequential designs.

- Lack of Robustness Checks: Not validating your model assumptions or testing sensitivity to different specifications.

Addressing these pitfalls ensures more reliable and trustworthy results in time series analysis for experimentation.
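The first pitfall is easy to demonstrate by simulation. The sketch below generates pairs of independent AR(1) series with no true difference between them and applies a naive Welch t-test that treats the observations as independent. The AR coefficient of 0.7, the series length, and the number of simulations are assumptions chosen for illustration, and each series starts from a zero initial state rather than its stationary distribution.

```python
import numpy as np
from scipy.signal import lfilter
from scipy.stats import ttest_ind

rng = np.random.default_rng(42)
n_sims, n_obs, phi = 1000, 100, 0.7

def simulate_ar1(shape):
    """AR(1) series y[t] = phi * y[t-1] + eps[t], generated row by row."""
    eps = rng.normal(size=shape)
    return lfilter([1.0], [1.0, -phi], eps, axis=-1)

# Two groups with identical distributions, so the null hypothesis is true
a = simulate_ar1((n_sims, n_obs))
b = simulate_ar1((n_sims, n_obs))

# Naive test that ignores the serial dependence within each series
_, p_values = ttest_ind(a, b, axis=1, equal_var=False)
false_positive_rate = float(np.mean(p_values < 0.05))

print(f"false positive rate at nominal 5%: {false_positive_rate:.3f}")
```

With positively autocorrelated data, the observed false positive rate should come out well above the nominal 5%, which is why models with autocorrelated errors or robust standard errors are needed.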

Q6: How do machine learning models contribute to advanced time series A/B testing?

Machine learning models enhance advanced A/B testing methods by improving forecasting accuracy for counterfactuals (e.g., using LSTMs or Transformers for complex time series patterns), identifying optimal donor units for synthetic controls, detecting anomalies that might confound experiments, and developing more sophisticated randomization schemes for geo-experiments. They can also be used to model and predict carryover effects or network externalities, providing deeper insights into the dynamics of treatment impact.

Conclusion and Recommendations

The journey from traditional A/B testing to incorporating advanced A/B testing methods rooted in time series analysis marks a significant evolution in data-driven experimentation. As digital products and services become increasingly complex and interconnected, and data accumulates with inherent temporal dependencies, relying solely on methods designed for i.i.d. observations is no longer sufficient. We have explored how challenges like autocorrelation, seasonality, carryover effects, and network externalities demand sophisticated solutions such as Interrupted Time Series (ITS), Causal Impact with Bayesian Structural Time Series (BSTS), Sequential A/B Testing, and Geo-Experimental Designs.

These methodologies empower data scientists to unlock deeper, more reliable insights into the true causal impact of interventions, even in scenarios where full randomization is impossible or impractical. By constructing robust counterfactuals, accounting for temporal dynamics, and mitigating spillover effects, organizations can move beyond surface-level observations to understand the intricate mechanisms through which changes influence user behavior and business outcomes. The integration of advanced statistical modeling, coupled with robust data infrastructure and specialized tooling, forms the bedrock of a modern experimentation strategy.

Looking ahead to 2024-2025, the field will likely see further advancements, including more seamless integration of machine learning for forecasting and anomaly detection, enhanced automation in experimentation platforms, and increasingly sophisticated methods for understanding complex network effects. For any organization committed to rigorous, data-informed decision-making, embracing these time series A/B testing techniques is not merely an advantage but a necessity. It represents a commitment to scientific rigor, enabling smarter product development, more effective marketing strategies, and ultimately, sustained growth in an ever-changing digital landscape. The future of experimentation is dynamic, and our methods must evolve to match its pace, ensuring that every decision is backed by robust causal inference A/B testing.

---

Site Name: Hulul Academy for Student Services

Email: info@hululedu.com

Website: hululedu.com