Modern ETL Processes, Tools, and Their Applications in Industry: Effective Data Integration for the Digital Age

In an era defined by an unprecedented explosion of data, organizations across every sector are grappling with the challenge of transforming raw, disparate information into actionable intelligence. The sheer volume, velocity, and variety of data generated daily—from transactional systems and IoT devices to social media feeds and external market indicators—present both an immense opportunity and a significant hurdle. Without effective mechanisms to consolidate, cleanse, and prepare this data, its potential remains largely untapped, leaving businesses to navigate complex landscapes with incomplete or inconsistent insights. This is precisely where Extract, Transform, Load (ETL) processes emerge as the indispensable backbone of modern data ecosystems. Far from being a mere technical chore, ETL is the critical bridge that connects data sources to data destinations, enabling robust analytics, advanced machine learning models, and ultimately, informed decision-making.

Historically, ETL was often a laborious, custom-coded, batch-oriented process, struggling to keep pace with the dynamic demands of a data-intensive world. However, the last decade, particularly moving into 2024-2025, has witnessed a profound evolution in ETL methodologies and tooling. Modern ETL processes are now characterized by their agility, scalability, real-time capabilities, and cloud-native architectures, seamlessly integrating with big data platforms and artificial intelligence frameworks. These advancements have democratized data integration, allowing organizations to ingest vast datasets, perform complex transformations on the fly, and deliver high-quality, ready-to-use data to business users and data scientists with unprecedented efficiency. This article delves deep into the landscape of modern ETL tools, exploring their sophisticated features, diverse applications across various industries, and the strategic imperative they represent for any organization striving for data-driven excellence.

The Evolution of ETL: From Batch to Real-time and Cloud-Native

The journey of Extract, Transform, Load (ETL) reflects the broader evolution of data management itself, moving from rudimentary, resource-intensive operations to sophisticated, highly automated, and distributed processes. Understanding this trajectory is crucial for appreciating the capabilities and strategic importance of modern ETL tools in today's data-driven landscape. The demands of big data, cloud computing, and real-time analytics have fundamentally reshaped how data is integrated and prepared for consumption.

Traditional ETL Architectures and Limitations

In its nascent stages, ETL was predominantly a batch-oriented process, designed for structured data residing in relational databases. Data extraction would typically occur during off-peak hours, often overnight, to minimize impact on operational systems. The transformation logic, frequently hand-coded or managed through proprietary scripts, involved complex SQL queries, stored procedures, and custom programming to clean, enrich, aggregate, and reformat data. Finally, the loaded data would populate data warehouses for business intelligence reporting. While effective for its time, this traditional approach faced significant limitations:

- Scalability Challenges: Handling ever-growing data volumes became a bottleneck, requiring substantial hardware upgrades and manual tuning.

- Latency: The batch nature meant that insights were always hours, if not days, old, rendering them less useful for real-time decision-making.

- Complexity and Maintenance: Custom code was difficult to maintain, debug, and scale, often leading to "ETL hell" with intricate dependencies.

- Limited Data Types: Primarily designed for structured data, traditional ETL struggled with semi-structured (JSON, XML) and unstructured data (text, images, video).

- Resource Intensive: Required significant compute and storage resources, often duplicating data in staging areas.

The Rise of Cloud ETL and ELT Paradigms

The advent of cloud computing dramatically reshaped the ETL landscape. Cloud platforms (AWS, Azure, GCP) offered elastic scalability, pay-as-you-go pricing, and managed services that abstracted away infrastructure complexities. This gave rise to "Cloud ETL solutions," which leverage the cloud's inherent advantages. More significantly, the power of cloud data warehouses and data lakes, capable of storing and processing massive amounts of raw data cheaply and efficiently, spurred the emergence of the ELT (Extract, Load, Transform) paradigm.

In ELT, data is first extracted from sources and loaded directly into a target data lake or data warehouse in its raw form. The transformation then occurs within the powerful computational environment of the cloud data platform itself. This approach offers several benefits:

- Speed and Agility: Data is available for analysis much faster, as transformation doesn't delay loading.

- Flexibility: Raw data is preserved, allowing for multiple transformation pathways and re-transformation as business needs evolve without re-extracting.

- Leveraging Cloud Compute: Utilizes the immense parallel processing capabilities of cloud data warehouses (e.g., Snowflake, BigQuery, Redshift) for efficient transformations.

- Simplicity: Often simplifies the initial ingestion process, as less pre-processing is required before loading.
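The ordering difference can be made concrete with a small ELT sketch. It uses Python's built-in `sqlite3` module as a stand-in for a cloud data warehouse; the table names (`raw_orders`, `orders_by_customer`) and the sample records are hypothetical. Raw rows land first with every field kept as untyped text, and only afterward does a SQL statement running inside the target engine trim, cast, and aggregate them.

```python
import json
import sqlite3

# Hypothetical payloads as they might arrive from a source API.
RAW_PAYLOADS = [
    '{"order_id": "1", "customer": " Acme ", "amount": "19.99"}',
    '{"order_id": "2", "customer": "acme", "amount": "5.01"}',
    '{"order_id": "3", "customer": "Globex", "amount": "42.00"}',
]


def extract_and_load(conn: sqlite3.Connection, payloads: list[str]) -> None:
    """E + L: land fields as raw TEXT, with no cleansing or type conversion."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS raw_orders "
        "(order_id TEXT, customer TEXT, amount TEXT)"
    )
    rows = [(r["order_id"], r["customer"], r["amount"])
            for r in map(json.loads, payloads)]
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", rows)


def transform_in_warehouse(conn: sqlite3.Connection) -> None:
    """T: cleanse, cast, and aggregate inside the target engine with SQL."""
    conn.executescript("""
        DROP TABLE IF EXISTS orders_by_customer;
        CREATE TABLE orders_by_customer AS
        SELECT LOWER(TRIM(customer)) AS customer,
               ROUND(SUM(CAST(amount AS REAL)), 2) AS total_amount
        FROM raw_orders
        GROUP BY LOWER(TRIM(customer));
    """)


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    extract_and_load(conn, RAW_PAYLOADS)
    transform_in_warehouse(conn)
    print(conn.execute(
        "SELECT * FROM orders_by_customer ORDER BY customer").fetchall())
```

Because `raw_orders` keeps the original values (including the untrimmed `" Acme "`), the transformation can be changed and re-run later without re-extracting from the source.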

Real-time Data Ingestion and Processing

The demand for immediate insights has propelled real-time ETL processes from a niche requirement to a mainstream necessity. Organizations increasingly need to react to events as they happen—detecting fraud, personalizing customer experiences, monitoring system health, or optimizing supply chains in real-time. Modern ETL tools incorporate streaming data technologies to facilitate this.

- Stream Processing: Tools leverage technologies like Apache Kafka, Apache Flink, and Spark Streaming to continuously ingest and process data streams.

- Low Latency: Data transformations and aggregations occur almost instantaneously, delivering insights with minimal delay.

- Event-Driven Architectures: ETL pipelines can be triggered by specific events, ensuring responsiveness and efficiency.
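Stream processors such as Flink or Spark Streaming typically express low-latency aggregation as windowed operations over an unbounded stream of events. The sketch below is a simplified, single-process illustration in plain Python, not the API of either engine: it groups hypothetical `(timestamp, key, value)` events into fixed-size (tumbling) windows and emits each window's totals as soon as an event from a later window arrives. It assumes events arrive in timestamp order; real engines additionally handle late and out-of-order data.

```python
from collections import defaultdict
from typing import Iterable, Iterator


def tumbling_window_sums(
    events: Iterable[tuple[int, str, float]], window_seconds: int
) -> Iterator[tuple[int, dict[str, float]]]:
    """Yield (window_start, {key: sum}) for each closed window.

    Assumes events are ordered by timestamp (in seconds).
    """
    current_start = None
    totals: dict[str, float] = defaultdict(float)
    for ts, key, value in events:
        window_start = ts - (ts % window_seconds)
        if current_start is not None and window_start != current_start:
            # An event from a later window closes the current one: emit it.
            yield current_start, dict(totals)
            totals = defaultdict(float)
        current_start = window_start
        totals[key] += value
    if current_start is not None:
        # Flush the last open window when the (finite) stream ends.
        yield current_start, dict(totals)


if __name__ == "__main__":
    stream = [(0, "card-1", 10.0), (4, "card-2", 3.0),
              (7, "card-1", 5.0), (12, "card-1", 1.0)]
    for start, sums in tumbling_window_sums(stream, window_seconds=10):
        print(start, sums)
```

Emitting on window close keeps memory bounded to one window's worth of keys, which is the property that lets streaming pipelines run indefinitely.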

The shift to real-time and cloud-native ETL/ELT represents a fundamental transformation, enabling businesses to unlock the true value of their data assets at an unprecedented pace and scale.

Key Characteristics of Modern ETL Tools

Modern ETL tools are a far cry from their predecessors, designed to tackle the complexities of big data, cloud environments, and the need for speed and agility. Their capabilities extend beyond simple data movement and transformation, encompassing aspects of data governance, automation, and user experience. Understanding these key characteristics is vital for selecting the right tools and designing effective data integration strategies for contemporary needs.

Scalability and Performance in Big Data Environments

One of the foremost challenges in modern data integration is handling the sheer volume and velocity of big data. Modern ETL tools are architected to scale horizontally, processing petabytes of data efficiently and cost-effectively. This is achieved through several mechanisms:

- Distributed Processing: Leveraging frameworks like Apache Spark, Hadoop, and Dask, these tools distribute processing across clusters of machines, enabling parallel execution of tasks.

- Cloud-Native Architectures: Built for elasticity, cloud ETL solutions can automatically provision and de-provision resources based on workload demands, ensuring optimal performance without over-provisioning.

- Optimized Data Formats: Support for columnar storage formats (e.g., Parquet, ORC) and optimized file partitioning significantly boosts query and processing speeds.

- In-memory Processing: Many tools utilize in-memory computation for faster data manipulation and aggregation, especially for real-time or near real-time scenarios.

This inherent scalability ensures that as data volumes grow, the ETL pipeline can expand to meet demand without requiring significant architectural overhauls or performance degradation.
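Distributed engines such as Spark scale out by splitting data into partitions, processing each partition independently, and then combining the partial results. The sketch below mimics that partition, map, and reduce pattern on a single machine using Python's `concurrent.futures`; the hash-partitioning scheme and the `region`/`amount` record fields are illustrative assumptions, and a thread pool stands in for work that a real cluster would spread across many nodes.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from zlib import crc32


def partition(records: list[dict], num_partitions: int) -> list[list[dict]]:
    """Hash-partition records by key so each key lands in exactly one partition."""
    parts: list[list[dict]] = [[] for _ in range(num_partitions)]
    for rec in records:
        # crc32 is stable across runs, unlike the built-in hash() on str.
        idx = crc32(rec["region"].encode("utf-8")) % num_partitions
        parts[idx].append(rec)
    return parts


def aggregate_partition(part: list[dict]) -> Counter:
    """Map step: compute partial sums for one partition independently."""
    totals: Counter = Counter()
    for rec in part:
        totals[rec["region"]] += rec["amount"]
    return totals


def parallel_sum_by_region(records: list[dict], num_partitions: int = 4) -> dict:
    parts = partition(records, num_partitions)
    with ThreadPoolExecutor(max_workers=num_partitions) as pool:
        partials = list(pool.map(aggregate_partition, parts))
    # Reduce step: merge partial results (Counter.update adds values).
    result: Counter = Counter()
    for p in partials:
        result.update(p)
    return dict(result)
```

Adding capacity in this model means adding partitions and workers rather than a bigger machine, which is the horizontal scaling described above.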

Automation, Orchestration, and Workflow Management

Manual intervention in ETL processes is a bottleneck, prone to errors, and unsustainable at scale. Modern ETL tools prioritize automation and robust workflow management to streamline operations and enhance reliability:

- Automated Data Ingestion: Features like Change Data Capture (CDC) automatically identify and replicate only the changed data, reducing load times and resource consumption.

- Workflow Orchestration: Tools like Apache Airflow or built-in orchestrators allow users to define, schedule, and monitor complex data pipelines as Directed Acyclic Graphs (DAGs). This ensures tasks run in the correct sequence, with dependencies managed automatically.

- Error Handling and Retries: Sophisticated error detection mechanisms, automatic retries, and configurable alerts minimize pipeline failures and simplify troubleshooting.

- Metadata Management: Automated capture and management of metadata (data lineage, schema changes, transformation rules) improve data governance, auditing, and understanding of data flows.

By automating repetitive tasks and providing clear visibility into pipeline execution, these tools free up data engineers to focus on more complex data strategy and innovation.
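The DAG idea behind orchestrators such as Airflow can be illustrated without Airflow itself. The following sketch is not Airflow's API; it uses Python's standard `graphlib.TopologicalSorter` (Python 3.9+) to run hypothetical task functions in dependency order and retries a failing task with exponential backoff before failing the whole run.

```python
import time
from graphlib import TopologicalSorter
from typing import Callable


def run_dag(tasks: dict[str, Callable[[], None]],
            deps: dict[str, set[str]],
            max_retries: int = 2,
            backoff_seconds: float = 0.0) -> list[str]:
    """Run tasks in dependency order and return the order they completed in.

    deps maps a task name to the set of task names it depends on.
    Each task gets up to 1 + max_retries attempts.
    """
    graph = {name: deps.get(name, set()) for name in tasks}
    order = []
    for name in TopologicalSorter(graph).static_order():
        for attempt in range(max_retries + 1):
            try:
                tasks[name]()
                break
            except Exception:
                if attempt == max_retries:
                    raise  # retries exhausted: fail the run
                time.sleep(backoff_seconds * (2 ** attempt))
        order.append(name)
    return order


if __name__ == "__main__":
    state = {"attempts": 0}

    def flaky_transform() -> None:
        # Hypothetical transient failure on the first attempt only.
        state["attempts"] += 1
        if state["attempts"] < 2:
            raise RuntimeError("transient failure")

    print(run_dag(
        {"extract": lambda: None, "transform": flaky_transform, "load": lambda: None},
        {"transform": {"extract"}, "load": {"transform"}},
    ))
```

A production orchestrator adds scheduling, persistence of task state, parallel execution of independent branches, and alerting on top of this core loop.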

Data Governance, Security, and Compliance Features

As data becomes a critical asset, ensuring its quality, security, and adherence to regulatory standards (like GDPR, HIPAA, CCPA) is paramount. Modern ETL tools integrate robust features for data governance and compliance:

- Data Masking and Anonymization: Capabilities to mask sensitive data during transformation or prior to loading into non-production environments.

- Access Control and Encryption: Integration with enterprise identity management systems (IAM) and robust encryption (at rest and in transit) ensure data security.

- Data Quality Checks: Built-in functionalities to validate data against predefined rules, identify anomalies, and cleanse inconsistent or erroneous entries. This includes profiling, validation, and enrichment.

- Auditing and Lineage: Comprehensive logging and tracking of data transformations, source-to-target mapping, and user activity provide full data lineage, crucial for compliance and debugging.

- Schema Evolution Management: Tools can intelligently handle changes in source schemas, automatically adapting transformations or alerting users, thus preventing pipeline breaks.

These features are not merely add-ons but core components that ensure data integrity, trustworthiness, and regulatory adherence throughout the data lifecycle.
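As an illustration of masking during transformation, the sketch below pseudonymizes an email address with a keyed hash (HMAC-SHA256 from Python's standard library), so records can still be joined on the masked value, and redacts a card number to its last four digits. The field names and the hard-coded key are hypothetical; a real pipeline would load the key from a secrets manager. Keyed hashing is pseudonymization rather than full anonymization, since anyone holding the key can re-derive the tokens.

```python
import hashlib
import hmac

# Hypothetical key for illustration only; load from a secrets manager in practice.
MASKING_KEY = b"replace-with-a-managed-secret"


def pseudonymize(value: str, key: bytes = MASKING_KEY) -> str:
    """Deterministic keyed hash: the same input always yields the same token."""
    normalized = value.strip().lower()
    return hmac.new(key, normalized.encode("utf-8"), hashlib.sha256).hexdigest()


def redact_card(card_number: str) -> str:
    """Keep only the last four digits, e.g. for non-production copies."""
    digits = "".join(ch for ch in card_number if ch.isdigit())
    return "*" * (len(digits) - 4) + digits[-4:]


def mask_record(record: dict) -> dict:
    masked = dict(record)  # leave the caller's record untouched
    masked["email"] = pseudonymize(record["email"])
    masked["card_number"] = redact_card(record["card_number"])
    return masked
```

Deterministic tokens preserve joins and distinct counts across masked tables, which is usually why keyed hashing is chosen over random replacement.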

Low-Code/No-Code Interfaces and Developer Productivity

The demand for data integration often outstrips the supply of highly specialized data engineers. Modern ETL tools address this by offering interfaces that cater to a broader range of users, from data analysts to business users, while also enhancing productivity for developers:

- Graphical User Interfaces (GUIs): Drag-and-drop interfaces allow users to design data pipelines visually, reducing the need for extensive coding.

- Pre-built Connectors: A rich library of connectors to various data sources (databases, SaaS applications, APIs, file systems) accelerates integration setup.

- Template and Reusability: Ability to create and reuse transformation templates, functions, and entire pipelines, fostering standardization and efficiency.

- Collaboration Features: Version control, shared workspaces, and approval workflows facilitate team collaboration on data projects.

- Scripting and Extensibility: While offering low-code options, most tools also provide robust APIs and support for custom scripting (Python, Scala, Java) to handle highly complex or unique transformation logic.

This blend of accessibility and power ensures that organizations can quickly build and deploy data pipelines, accelerating time-to-insight and empowering a wider range of personnel to work with data.

Leading Modern ETL Tools and Platforms

The market for modern ETL tools is vibrant and diverse, with solutions catering to various needs, from cloud-native ecosystems to open-source flexibility and commercial enterprise powerhouses. Selecting the right tool depends on factors such as existing infrastructure, budget, technical expertise, scalability requirements, and specific integration challenges. This section highlights some of the prominent players and categories dominating the modern ETL landscape in 2024-2025.

Cloud-Native Solutions (AWS Glue, Azure Data Factory, Google Cloud Dataflow)

Cloud providers offer fully managed, scalable, and integrated ETL services that are deeply embedded within their respective ecosystems. These solutions are ideal for organizations already leveraging a specific cloud platform or those looking for seamless integration with other cloud services like data lakes and data warehouses.

- AWS Glue: A serverless data integration service that makes it easy to discover, prepare, and combine data for analytics, machine learning, and application development. It automatically discovers schema, generates ETL code (in Python via PySpark, or in Scala), and runs it on a serverless Spark environment. It integrates tightly with Amazon S3, Redshift, Athena, and other AWS services.

- Azure Data Factory (ADF): Microsoft Azure's cloud-based data integration service that allows users to create, schedule, and orchestrate data pipelines. ADF provides a rich set of connectors, supports both code-free visual authoring and custom coding, and integrates well with Azure Synapse Analytics, Azure Databricks, and Azure Data Lake Storage. It's particularly strong for hybrid data integration scenarios.

- Google Cloud Dataflow: A fully managed service for executing Apache Beam pipelines. Dataflow is designed for both batch and stream processing, offering automatic scaling and resource management. It's highly optimized for large-scale data processing and integrates seamlessly with Google BigQuery, Cloud Storage, and other GCP services, making it a strong choice for real-time analytics and complex transformations.

Open-Source Powerhouses (Apache Airflow, Apache NiFi, Apache Spark)

Open-source tools provide flexibility, community support, and often cost advantages, making them popular choices for organizations with strong internal engineering teams and specific customization needs.

- Apache Airflow: While not an ETL tool itself, Airflow is a powerful open-source platform to programmatically author, schedule, and monitor data pipelines. It's widely used for orchestrating complex ETL workflows, managing dependencies, and providing a clear UI for monitoring job status. It's highly extensible: pipelines are defined in Python, but individual tasks can run work written in any language, for example through Bash, Docker, or Kubernetes operators.

- Apache NiFi: Designed for automated data flow between systems, NiFi excels at moving and transforming data in real-time. It provides a web-based UI for creating, monitoring, and managing data flows with a drag-and-drop interface, making it particularly strong for data ingestion, routing, and basic transformations, especially for edge computing scenarios.

- Apache Spark: A unified analytics engine for large-scale data processing. Spark offers APIs in Python, Java, Scala, and R, and supports various workloads including SQL, streaming, machine learning, and graph processing. Its DataFrames API and distributed processing capabilities make it a de facto standard for performing complex transformations (the 'T' in ETL/ELT) on big data, often underlying many commercial and cloud-native ETL solutions.

Commercial Leaders (Talend, Informatica, Fivetran, Stitch, Matillion)

Commercial ETL tools often offer comprehensive features, extensive connector libraries, dedicated support, and enterprise-grade capabilities like advanced data governance and security, appealing to large enterprises with complex integration requirements.

- Talend: Offers a comprehensive suite of data integration and data governance products. Talend Data Fabric provides a unified platform for traditional ETL, cloud integration, big data, data quality, and master data management. It supports both graphical design and code generation, catering to various skill levels.

- Informatica PowerCenter / Cloud Data Integration: A long-standing leader in the enterprise ETL space. Informatica provides robust, scalable solutions for complex data integration challenges, offering extensive connectors, advanced data quality features, and strong metadata management. Its cloud-native offerings cater to modern cloud data warehousing needs.

- Fivetran: Specializes in automated data movement, focusing heavily on the 'EL' part of ELT. Fivetran provides pre-built, fully managed connectors for hundreds of SaaS applications, databases, and file storage, automatically handling schema changes and ensuring data replication with minimal setup. It's favored for its simplicity and reliability.

- Stitch (Talend): Similar to Fivetran, Stitch offers a cloud-based data pipeline service that automates the extraction and loading of data from various sources into data warehouses and data lakes. It's known for its ease of use and broad connector library, often chosen by smaller teams or for specific departmental data integration needs.

- Matillion: A cloud-native ELT platform optimized for popular cloud data warehouses like Snowflake, Amazon Redshift, and Google BigQuery. Matillion provides a powerful, visual interface for data transformation, allowing users to build complex logic directly within their cloud data warehouse, leveraging its compute power.

Data Virtualization and Reverse ETL Tools

Beyond traditional ETL/ELT, other specialized tools are gaining prominence:

- Data Virtualization (e.g., Denodo, Tibco Data Virtualization): Creates a virtual layer that integrates data from disparate sources without physically moving it. This allows users to query and combine data in real-time across various systems, useful for agile data access and reducing data replication.

- Reverse ETL (e.g., Census, Hightouch): Focuses on pushing processed and transformed data from data warehouses back into operational SaaS applications (CRM, marketing automation, support tools). This enables data-driven personalization, segmentation, and operational workflows directly within the tools business users interact with daily.
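One common mechanic in reverse ETL is syncing only what changed since the last run, so operational tools are not flooded with redundant API calls. The sketch below compares the current warehouse output (a hypothetical customer-segment table keyed by customer id) against a snapshot of what was last sent, and plans upserts and deletes. The call to the destination's API is deliberately left out, since every CRM or marketing tool has its own interface.

```python
def plan_sync(current: dict[str, dict], last_synced: dict[str, dict]
              ) -> tuple[list[dict], list[str]]:
    """Return (rows to upsert, ids to delete) for the destination app.

    Both inputs map a record id (e.g. a customer id) to its attribute row.
    """
    upserts = [
        {"id": rid, **row}
        for rid, row in current.items()
        if last_synced.get(rid) != row  # new, or changed since the last sync
    ]
    deletes = [rid for rid in last_synced if rid not in current]
    return upserts, deletes


if __name__ == "__main__":
    last = {"c1": {"segment": "vip"}, "c2": {"segment": "new"}}
    current = {"c1": {"segment": "vip"}, "c2": {"segment": "active"},
               "c3": {"segment": "new"}}
    print(plan_sync(current, last))
```

After a successful push, `current` becomes the new `last_synced` snapshot, so the next run again sends only the difference.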

The landscape is continuously evolving, with tools often incorporating features from various categories to offer more comprehensive data integration solutions.

Table 1: Comparison of Modern ETL Tool Categories

| Category | Key Characteristics | Example Tools | Best Suited For |
|---|---|---|---|
| Cloud-Native | Serverless, auto-scaling, deep integration with cloud ecosystem. | AWS Glue, Azure Data Factory, Google Cloud Dataflow | Organizations heavily invested in a specific cloud platform. |
| Open-Source | Flexible, customizable, community-driven, often free. | Apache Airflow, Apache NiFi, Apache Spark | Companies with strong engineering teams, custom needs, cost-conscious. |
| Commercial SaaS/On-Prem | Comprehensive features, extensive connectors, enterprise support. | Talend, Informatica, Fivetran, Stitch, Matillion | Enterprises requiring robust features, compliance, and managed services. |
| Reverse ETL | Pushes processed data from DWH back to operational apps. | Census, Hightouch | Enabling data-driven operations and personalization in SaaS tools. |

Real-world Applications of Modern ETL in Industry

The strategic value of modern ETL processes is best illustrated through their diverse and impactful applications across various industries. By enabling efficient data integration, these processes empower organizations to gain deeper insights, optimize operations, enhance customer experiences, and drive innovation. Here are several practical examples and real case studies showcasing the power of modern ETL tools.

Financial Services: Fraud Detection and Risk Management

In the financial sector, the speed and accuracy of data processing are paramount for maintaining security and stability. Modern ETL tools play a critical role in aggregating vast amounts of transactional data, customer behavior patterns, and external risk indicators from disparate sources (e.g., banking systems, credit card processors, market data feeds).

- Fraud Detection: Real-time ETL pipelines ingest transaction data as it occurs, transforming and feeding it into machine learning models. For instance, a bank might use Apache Kafka and Spark Streaming to collect credit card transactions globally, apply real-time anomaly detection algorithms, and flag suspicious activities within milliseconds. This allows for immediate action, preventing financial losses and protecting customers.

- Risk Management: Financial institutions integrate data from various internal systems (loan applications, investment portfolios, trading platforms) and external sources (credit bureaus, economic indicators). Modern ETL, often using cloud-native solutions like Azure Data Factory or AWS Glue, consolidates this data into a centralized data lake or data warehouse. This unified view enables comprehensive risk assessment, regulatory reporting (e.g., Basel III, Solvency II), and stress testing, helping to identify potential exposures and make informed capital allocation decisions.
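Production fraud systems rely on trained models, but the shape of the streaming transform that feeds them can be sketched simply. The example below keeps running per-card statistics with Welford's online algorithm and flags a transaction whose amount sits more than a chosen number of standard deviations above that card's history. The threshold, the minimum history length, and the card identifiers are illustrative assumptions, and the z-score rule is a stand-in for a real anomaly-detection model.

```python
import math
from dataclasses import dataclass


@dataclass
class RunningStats:
    """Welford's online mean/variance: constant memory per card."""
    count: int = 0
    mean: float = 0.0
    m2: float = 0.0  # running sum of squared deviations from the mean

    def update(self, x: float) -> None:
        self.count += 1
        delta = x - self.mean
        self.mean += delta / self.count
        self.m2 += delta * (x - self.mean)

    @property
    def std(self) -> float:
        # Sample standard deviation; 0.0 until there are two observations.
        return math.sqrt(self.m2 / (self.count - 1)) if self.count > 1 else 0.0


class FraudFlagger:
    def __init__(self, z_threshold: float = 3.0, min_history: int = 5):
        self.z_threshold = z_threshold
        self.min_history = min_history
        self.stats: dict[str, RunningStats] = {}

    def process(self, card_id: str, amount: float) -> bool:
        """Score the transaction against prior history, then add it to history."""
        s = self.stats.setdefault(card_id, RunningStats())
        suspicious = (
            s.count >= self.min_history
            and s.std > 0
            and (amount - s.mean) / s.std > self.z_threshold
        )
        s.update(amount)
        return suspicious
```

Scoring before updating matters: otherwise an extreme transaction would inflate the very statistics it is being compared against.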

Case Study Snippet: A large investment bank implemented a modern ELT strategy using Matillion and Snowflake. They integrated data from dozens of legacy systems and market data providers, reducing data latency for risk reporting from hours to minutes, significantly improving their ability to respond to market fluctuations and regulatory audits.

Retail and E-commerce: Personalized Customer Experiences and Supply Chain Optimization

The retail and e-commerce industries thrive on understanding customer behavior and optimizing operational efficiency. Modern ETL is fundamental to achieving both.

- Personalized Customer Experiences: E-commerce platforms leverage real-time ETL to collect clickstream data, purchase history, browsing patterns, and customer demographic information. Tools like Fivetran or Stitch automate the ingestion of this data from various SaaS applications (CRM, marketing automation, loyalty programs) into a data warehouse. Once integrated and transformed, this data fuels recommendation engines, personalized marketing campaigns, and dynamic pricing strategies, leading to higher conversion rates and customer satisfaction. Reverse ETL tools can then push these personalized insights back into marketing platforms for targeted outreach.

- Supply Chain Optimization: Retailers use ETL to integrate data from inventory management systems, point-of-sale (POS) data, supplier networks, logistics partners, and external factors like weather forecasts. This comprehensive view, often built using solutions like Talend or Informatica, enables demand forecasting, optimizes stock levels, identifies potential supply chain disruptions, and streamlines order fulfillment processes, reducing costs and improving delivery times.

Case Study Snippet: A leading online retailer utilized Google Cloud Dataflow to process streaming data from website interactions and mobile app usage. This real-time pipeline allowed them to update customer profiles and product recommendations instantaneously, boosting engagement and sales by 15% within six months.

Healthcare: Patient Data Integration and Clinical Research

Healthcare organizations manage incredibly sensitive and complex data, making robust and secure ETL processes essential for patient care, operational efficiency, and medical advancement.

- Unified Patient Records: Hospitals and healthcare networks use modern ETL to integrate patient data from disparate Electronic Health Record (EHR) systems, lab results, imaging systems, billing records, and wearables. Tools like Informatica, with strong data governance and security features, are critical for ensuring HIPAA compliance while creating a holistic view of each patient. This unified data empowers clinicians with complete information for diagnosis and treatment planning, reduces medical errors, and improves care coordination.

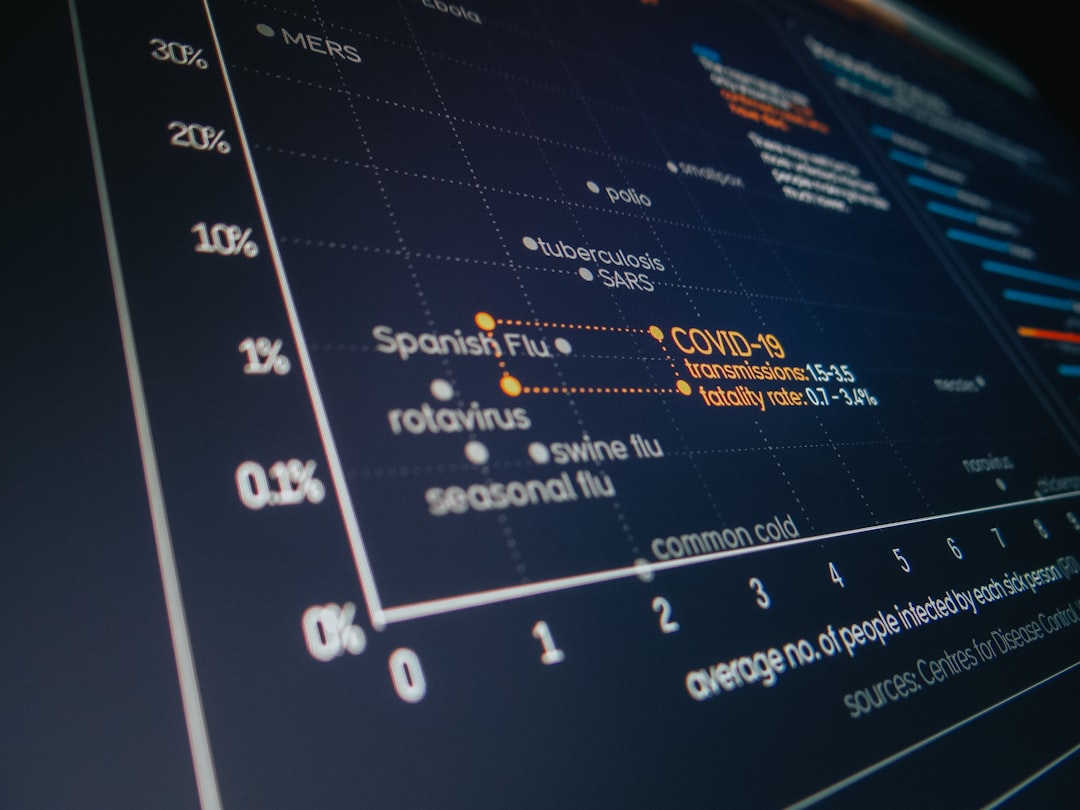

- Clinical Research and Public Health: Researchers leverage ETL to aggregate anonymized patient data, clinical trial results, genomic information, and public health datasets. Cloud ETL solutions (e.g., AWS Glue, Azure Data Factory) are often used to process these massive and diverse datasets into research data lakes. This enables faster analysis for drug discovery, disease pattern identification, and epidemiological studies, accelerating medical breakthroughs and informing public health policies.

Case Study Snippet: A major hospital system implemented an ETL pipeline using Apache Spark and Airflow to consolidate patient data from over 50 legacy systems into a secure data lake. This streamlined data access for researchers, reducing the time to prepare data for new clinical studies by 40%.

Manufacturing: IoT Data Processing and Predictive Maintenance

The manufacturing industry is increasingly leveraging IoT devices to gather operational data, and modern ETL is key to harnessing this information.

- IoT Data Processing: Factories deploy sensors on machinery, assembly lines, and products to collect vast streams of data on performance, temperature, vibration, and quality. Apache NiFi is often used at the edge or in intermediary layers to ingest, route, and perform initial transformations on this high-volume, high-velocity data before sending it to a central cloud data lake.

- Predictive Maintenance: By integrating real-time sensor data with historical maintenance records, operational parameters, and environmental conditions, modern ETL pipelines feed these datasets into machine learning models. These models can then predict equipment failures before they occur. This allows manufacturers to schedule maintenance proactively, minimize downtime, extend asset lifespan, and optimize production schedules, leading to significant cost savings and improved efficiency.
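A typical transformation between raw sensor readings and a predictive-maintenance model is rolling-window feature extraction per machine. The sketch below computes a rolling mean of vibration readings over the last N samples using a bounded `deque`, and raises an alert when the smoothed value exceeds a limit. The window size, the limit, and the machine identifiers are hypothetical, and a fixed threshold is a simplification of what a trained model would decide.

```python
from collections import defaultdict, deque


class RollingVibrationMonitor:
    """Per-machine rolling mean over the last `window` readings."""

    def __init__(self, window: int = 5, limit: float = 7.0):
        self.window = window
        self.limit = limit
        # Each deque drops its oldest reading automatically once full.
        self.readings = defaultdict(lambda: deque(maxlen=window))

    def add(self, machine_id: str, vibration: float):
        """Return (rolling_mean, alert) once the window is full, else None."""
        buf = self.readings[machine_id]
        buf.append(vibration)
        if len(buf) < self.window:
            return None  # not enough history yet to smooth
        mean = sum(buf) / len(buf)
        return mean, mean > self.limit


if __name__ == "__main__":
    monitor = RollingVibrationMonitor(window=3, limit=5.0)
    for reading in [4.0, 5.0, 6.0, 9.0]:
        print(monitor.add("press-1", reading))
```

Smoothing over a window suppresses single noisy spikes, so alerts reflect a sustained trend rather than one bad sample.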

Case Study Snippet: An automotive manufacturer deployed an ETL strategy using Apache Kafka for data ingestion and Google Cloud Dataflow for real-time processing of IoT sensor data from their production lines. This enabled a predictive maintenance program that reduced unplanned downtime by 20% and saved millions in operational costs annually.

These examples underscore that modern ETL is not just a technical component but a strategic enabler, driving transformative outcomes across diverse industrial landscapes by turning raw data into a valuable, actionable asset.

ETL Strategies for Big Data and Data Lake Architectures

The proliferation of big data and the adoption of data lake architectures have profoundly influenced ETL strategies. Traditional ETL methods often falter when faced with petabyte-scale data, diverse data types, and the need for agile analytics. Modern ETL processes are specifically designed to address these challenges, enabling organizations to build robust, scalable, and flexible data pipelines for their data lakes and data warehouses.

Designing Robust Data Pipelines for Large Datasets

Building ETL pipelines for big data requires a shift in mindset and tooling. The focus moves from single-server processing to distributed computing, and from rigid schemas to schema-on-read flexibility.

- Distributed Processing Frameworks: Leveraging technologies like Apache Spark is fundamental. Modern ETL tools often utilize Spark under the hood or provide direct integration for building transformation logic. Spark's in-memory processing and fault tolerance make it ideal for handling large datasets efficiently.

- Parallelism and Partitioning: Designing pipelines to maximize parallelism is crucial. Data is typically partitioned across multiple nodes, and ETL operations are applied in parallel. Proper partitioning strategies (e.g., by date, customer ID, geographic region) can significantly improve query performance and reduce processing times.

- Incremental Loading and Change Data Capture (CDC): Instead of re-processing entire datasets, modern pipelines focus on incremental updates. CDC mechanisms identify and capture only the data that has changed in source systems, greatly reducing the volume of data to be processed and loaded. This is vital for maintaining up-to-date data lakes and reducing computational overhead.

- Batch and Stream Integration: Big data pipelines often combine batch processing for historical data and stream processing for real-time events. Tools like Apache Flink or Spark Streaming allow for a unified approach, processing both types of data with consistent logic and APIs.

- Data Governance and Metadata Management: With large datasets, knowing what data resides where, its lineage, and its quality becomes even more challenging. Robust metadata management and cataloging tools (e.g., AWS Glue Data Catalog, Apache Atlas) are integrated into ETL processes to provide visibility and ensure data trustworthiness.

Data Lakehouse Concepts and ETL's Role

The "data lakehouse" architecture is an emerging paradigm that combines the flexibility and low cost of data lakes with the data management and performance features of data warehouses. ETL plays a central role in realizing the data lakehouse vision.

- Schema Enforcement and Quality: While data lakes traditionally allow raw data, lakehouses introduce schema enforcement and data quality checks during the ETL process, ensuring that data moving into curated layers is reliable. This often involves using open table formats like Delta Lake, Apache Iceberg, or Apache Hudi, which provide ACID transactions, schema evolution, and time travel capabilities directly on data lake storage.

- Multi-layered Architecture: ETL in a data lakehouse typically involves moving data through several layers, often called the medallion architecture:

  - Raw (Bronze) Layer: Ingesting data as-is (EL).

  - Silver Layer: Cleansing, de-duplicating, and basic transformations (T).

  - Gold Layer: Aggregating, enriching, and structuring data for specific analytical use cases (T & L).

  Each layer has increasing levels of refinement and governance, with ETL tools managing the transitions between them.

- Unified Access: ETL processes ensure that data from all layers is accessible through a consistent interface (e.g., SQL queries via Spark or directly on the object storage), allowing different personas (data scientists, business analysts) to access data appropriate for their needs.
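The layer-by-layer flow can be sketched in plain Python to show what each hop adds. In this minimal example, with hypothetical field names, the raw step keeps records exactly as received, the silver step enforces a schema (typed, required fields) and de-duplicates on a key while quarantining rejects, and the gold step aggregates for a reporting use case. Open table formats such as Delta Lake or Iceberg would add ACID guarantees and schema evolution on top; none of that is modeled here.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Order:  # schema enforced at the silver layer
    order_id: str
    region: str
    amount: float


def to_raw(payloads: list[dict]) -> list[dict]:
    """Raw layer: store exactly what arrived, duplicates and bad rows included."""
    return [dict(p) for p in payloads]


def to_silver(raw: list[dict]) -> tuple[list[Order], list[dict]]:
    """Silver layer: enforce types and required fields, de-duplicate by order_id.

    Returns (valid rows, rejected rows) so bad records are quarantined, not lost.
    """
    seen: dict[str, Order] = {}
    rejected = []
    for rec in raw:
        try:
            order = Order(str(rec["order_id"]), str(rec["region"]).strip().lower(),
                          float(rec["amount"]))
        except (KeyError, TypeError, ValueError):
            rejected.append(rec)
            continue
        seen[order.order_id] = order  # last write wins for duplicate keys
    return list(seen.values()), rejected


def to_gold(silver: list[Order]) -> dict[str, float]:
    """Gold layer: business-level aggregate (revenue per region)."""
    revenue: dict[str, float] = defaultdict(float)
    for o in silver:
        revenue[o.region] += o.amount
    return dict(revenue)
```

Keeping rejected rows alongside valid ones, rather than dropping them, preserves the audit trail that the governance features discussed earlier depend on.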

Handling Semi-structured and Unstructured Data

Traditional ETL struggled with anything beyond highly structured data. Modern ETL tools are built to handle the diversity of big data formats.

- Schema-on-Read: Data lakes excel at storing raw data in many formats, from loosely structured files with no enforced schema (JSON, XML, CSV) to self-describing formats that embed their own schema (Parquet, ORC, Avro). Modern ETL tools can dynamically infer schemas or apply them during the read process (schema-on-read), making it easier to ingest and transform diverse data without rigid upfront schema definitions.

- Specialized Parsers and Connectors: Tools offer specialized connectors and parsers for various semi-structured formats. For example, AWS Glue can automatically crawl S3 buckets containing JSON or XML files, infer their schema, and make them queryable.

- Text Analytics and NLP Integration: For unstructured data like text documents or social media feeds, modern ETL pipelines can integrate with natural language processing (NLP) libraries or services (e.g., Amazon Comprehend, Azure Text Analytics) to extract entities, sentiment, and other meaningful insights before loading the structured results into the data lake or warehouse.

- Binary Data Handling: While harder to "transform" in the traditional sense, ETL can manage the storage, indexing, and metadata extraction for binary data like images, audio, and video, preparing it for AI/ML models or specialized content management systems.
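Schema-on-read often starts with flattening nested documents and inferring column types from the data itself. The sketch below does both for JSON lines using only the standard library; the dotted column-naming convention and the rule that conflicting types widen to `str` (with `int` widening to `float`) are illustrative choices, not how any particular crawler, including AWS Glue's, behaves.

```python
import json


def flatten(obj: dict, prefix: str = "") -> dict:
    """Flatten nested objects into dotted column names, e.g. user.geo.country."""
    flat = {}
    for key, value in obj.items():
        name = f"{prefix}{key}"
        if isinstance(value, dict):
            flat.update(flatten(value, prefix=f"{name}."))
        else:
            flat[name] = value
    return flat


def infer_schema(records: list[dict]) -> dict[str, str]:
    """Infer one type name per column; conflicting types widen to 'str'."""
    schema: dict[str, str] = {}
    for rec in records:
        for col, value in rec.items():
            if value is None:
                continue  # nulls carry no type information
            t = type(value).__name__
            prev = schema.get(col)
            if prev is None:
                schema[col] = t
            elif prev != t:
                # int and float widen to float; any other conflict becomes str.
                schema[col] = "float" if {prev, t} == {"int", "float"} else "str"
    return schema


if __name__ == "__main__":
    lines = [
        '{"id": 1, "user": {"name": "ana", "geo": {"country": "PT"}}, "score": 3}',
        '{"id": 2, "user": {"name": "li"}, "score": 4.5, "tags": null}',
    ]
    rows = [flatten(json.loads(line)) for line in lines]
    print(infer_schema(rows))
```

Columns that are null in every record (like `tags` here) never receive a type, which is one reason inferred schemas are usually reviewed before data is promoted to curated layers.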

By embracing these strategies, organizations can effectively manage the complexities of big data, build resilient data pipelines, and unlock the full potential of their data lake and data warehouse investments.

Best Practices for Implementing Modern ETL Processes

Implementing modern ETL processes effectively goes beyond merely selecting the right tools; it requires a strategic approach, adherence to best practices, and a continuous focus on data quality, performance, and maintainability. These guidelines are crucial for building robust, scalable, and trustworthy data pipelines in 2024-2025 and beyond.

Data Quality Management and Validation

Poor data quality can render even the most sophisticated analytics useless. Integrating data quality management throughout the ETL pipeline is non-negotiable.

- Define Data Quality Rules: Establish clear rules for data accuracy, completeness, consistency, timeliness, and uniqueness before pipeline development.

- Profile Source Data: Understand the characteristics and potential issues of source data through profiling tools. This helps anticipate transformation challenges.

- Implement Validation Checks: Integrate automated validation checks at various stages of the ETL process (e.g., after extraction, after transformation). This includes checks for null values, data type mismatches, out-of-range values, duplicate records, and adherence to business rules.

- Data Cleansing and Standardization: Implement transformation logic to cleanse (e.g., remove special characters, correct misspellings) and standardize data (e.g., uniform date formats, consistent units).

- Error Logging and Reporting: Establish a robust mechanism to log data quality issues, categorize them, and report them for investigation and resolution.

- Data Governance Framework: Embed data quality within a broader data governance framework, assigning ownership and accountability for data quality across the organization.
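The validation and error-logging practices above can be sketched in plain Python: each row is checked for completeness, type, range, and uniqueness, and failing rows are collected with a reason instead of being silently dropped. The column names and rules are hypothetical; dedicated data quality frameworks offer richer, declarative versions of the same idea.

```python
from dataclasses import dataclass, field

# Hypothetical rules, agreed with data owners before pipeline development.
REQUIRED_FIELDS = ("customer_id", "email", "age")
AGE_RANGE = (0, 120)

SAMPLE_ROWS = [
    {"customer_id": 1, "email": "a@example.com", "age": 34},
    {"customer_id": 2, "email": None, "age": 28},
    {"customer_id": 3, "email": "c@example.com", "age": 150},
    {"customer_id": 1, "email": "d@example.com", "age": "40"},
]


@dataclass
class ValidationResult:
    valid: list = field(default_factory=list)
    errors: list = field(default_factory=list)  # (row_index, reason) pairs to log and report


def validate(rows):
    result = ValidationResult()
    seen_ids = set()
    for i, row in enumerate(rows):
        reasons = []
        # Completeness: required fields must be present and non-null.
        missing = [f for f in REQUIRED_FIELDS if row.get(f) is None]
        if missing:
            reasons.append(f"missing {missing}")
        # Data type and out-of-range checks.
        age = row.get("age")
        if age is not None and not isinstance(age, int):
            reasons.append("age is not an integer")
        elif age is not None and not AGE_RANGE[0] <= age <= AGE_RANGE[1]:
            reasons.append("age out of range")
        # Uniqueness on the business key.
        cid = row.get("customer_id")
        if cid is not None:
            if cid in seen_ids:
                reasons.append("duplicate customer_id")
            seen_ids.add(cid)
        if reasons:
            result.errors.append((i, "; ".join(reasons)))
        else:
            result.valid.append(row)
    return result


if __name__ == "__main__":
    report = validate(SAMPLE_ROWS)
    print(len(report.valid), report.errors)
```

Keeping the reasons alongside row indexes gives the error logging and reporting step something concrete to categorize.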

Monitoring, Alerting, and Error Handling

Even the most meticulously designed ETL pipelines can encounter issues. Proactive monitoring and effective error handling are critical for maintaining data flow and ensuring reliability.

- Comprehensive Monitoring: Implement monitoring tools to track key metrics such as pipeline execution time, data volume processed, success/failure rates, resource utilization (CPU, memory), and data latency.

- Configurable Alerting: Set up alerts for critical events, such as pipeline failures, prolonged execution times, data quality anomalies, or significant drops in data volume. Alerts should be routed to appropriate teams (e.g., via email, Slack, PagerDuty).

- Robust Error Handling: Design pipelines with explicit error handling mechanisms. This includes:

  - Graceful Failure: Prevent a single bad record from crashing the entire pipeline.

  - Retry Logic: Implement automatic retries for transient errors (e.g., network issues, temporary database unavailability).

  - Dead Letter Queues/Tables: Divert problematic records to a separate queue or table for later investigation without stopping the main data flow.

  - Detailed Logging: Ensure comprehensive logging of errors, including context, timestamps, and affected data points.

- Observability: Beyond monitoring, strive for observability. This means having the ability to ask arbitrary questions about the state of your ETL pipelines and diagnose issues without deploying new code.
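A minimal Python sketch of two of these error-handling patterns follows: retrying transient failures with exponential backoff, and diverting bad records to a dead letter list so the rest of the batch still loads. `TransientError`, `with_retries`, and `process_batch` are hypothetical names; in a real pipeline the dead letter list would be a queue or table, and the set of retryable exceptions would depend on the client library in use.

```python
import random
import time


class TransientError(Exception):
    """Hypothetical stand-in for a recoverable failure such as a network timeout."""


def with_retries(func, *, attempts=3, base_delay=0.5, sleep=time.sleep):
    """Call func, retrying TransientError with exponential backoff and jitter."""
    for attempt in range(1, attempts + 1):
        try:
            return func()
        except TransientError:
            if attempt == attempts:
                raise  # retries exhausted: surface the error to the orchestrator
            # Delay doubles each attempt (0.5s, 1s, 2s, ...) plus up to 10% jitter.
            delay = base_delay * 2 ** (attempt - 1)
            sleep(delay * (1 + 0.1 * random.random()))


def process_batch(records, transform, dead_letters):
    """Transform records one by one, diverting failures to a dead letter list."""
    loaded = []
    for record in records:
        try:
            loaded.append(transform(record))
        except (ValueError, KeyError) as exc:
            # Graceful failure: keep the bad record and the error for investigation.
            dead_letters.append({"record": record, "error": repr(exc)})
    return loaded


if __name__ == "__main__":
    dlq = []
    print(process_batch(["1", "2", "oops", "4"], int, dlq))  # [1, 2, 4]
    print(dlq)
```

The `sleep` parameter is injectable so the backoff can be tested without real waiting; jitter spreads out retries from many workers so they do not hit a recovering service at the same instant.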

Incremental Loading and Change Data Capture (CDC)

Efficiently managing data updates is paramount for performance and resource optimization, especially with big data volumes.

- Incremental Loading: Design pipelines to process and load only new or changed data, rather than reloading entire datasets. This reduces processing time, network bandwidth, and storage costs.

- Change Data Capture (CDC): Implement CDC techniques at the source system level to identify and extract only the data modifications (inserts, updates, deletes) since the last load. Common CDC methods include:

  - Timestamp-based: Using a 'last_updated' column (simple, but cannot detect hard deletes).

  - Log-based: Reading database transaction logs (more robust).

  - Trigger-based: Using database triggers (can impact source system performance).

- Versioning Data: For historical analysis, consider techniques like slowly changing dimensions (SCD Type 2) or appending changes to create a full history of records in the data warehouse/lake.
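Timestamp-based CDC and SCD Type 2 versioning can be combined in a short Python sketch: each run extracts only rows whose `last_updated` value is newer than a stored watermark, then expires the current version of each changed record and appends a new one with validity dates. The customer/city schema and the helper names are hypothetical, and in practice the watermark would be persisted between runs rather than held in a variable.

```python
from datetime import datetime


def extract_changes(source_rows, watermark):
    """Timestamp-based CDC: rows modified after the watermark, plus the new watermark."""
    changed = sorted((r for r in source_rows if r["last_updated"] > watermark),
                     key=lambda r: r["last_updated"])
    new_watermark = changed[-1]["last_updated"] if changed else watermark
    return changed, new_watermark


def apply_scd2(dimension, changes):
    """SCD Type 2: expire the current version of a changed row and append a new one."""
    for row in changes:
        current = next((d for d in dimension
                        if d["customer_id"] == row["customer_id"] and d["valid_to"] is None),
                       None)
        if current is not None:
            if current["city"] == row["city"]:
                continue  # tracked attribute unchanged: no new version needed
            current["valid_to"] = row["last_updated"]  # close the old version
        dimension.append({"customer_id": row["customer_id"], "city": row["city"],
                          "valid_from": row["last_updated"], "valid_to": None})
    return dimension


if __name__ == "__main__":
    source = [
        {"customer_id": 1, "city": "Cairo", "last_updated": datetime(2024, 1, 5)},
        {"customer_id": 2, "city": "Dubai", "last_updated": datetime(2024, 1, 9)},
    ]
    dim, watermark = [], datetime.min
    changes, watermark = extract_changes(source, watermark)  # first run: everything is new
    apply_scd2(dim, changes)
    # Customer 1 moves; only that row's last_updated advances.
    source[0] = {"customer_id": 1, "city": "Riyadh", "last_updated": datetime(2024, 2, 1)}
    changes, watermark = extract_changes(source, watermark)  # second run: one row
    apply_scd2(dim, changes)
    for version in dim:
        print(version)
```

The second run touches a single row instead of reloading the table, and the dimension keeps both the Cairo and Riyadh versions for historical analysis.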

Cost Optimization in Cloud ETL

While cloud ETL solutions offer immense scalability, managing costs effectively is crucial, given their pay-as-you-go nature.

- Right-Sizing Resources: Continuously monitor resource utilization and adjust compute and memory allocations for ETL jobs to match actual needs, avoiding over-provisioning. Cloud-native solutions often offer auto-scaling, but manual tuning might still be necessary for specific workloads.

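- Serverless ETL: Prefer serverless ETL services (like AWS Glue, Azure Data Factory, Google Cloud Dataflow) for intermittent or bursty workloads: billing is based on the compute actually consumed, which largely eliminates idle-resource costs. For steady, round-the-clock workloads, provisioned capacity can be cheaper, so compare both options.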

- Optimized Storage: Store intermediate and final data in cost-effective storage tiers (e.g., Amazon S3 Glacier, Azure Blob Storage Cool/Archive) when immediate access is not required. Use optimized file formats (Parquet, ORC) to reduce storage footprint and I/O operations.

- Scheduled Shut-downs: For batch ETL jobs running on dedicated clusters (e.g., Spark clusters), ensure they are automatically shut down or scaled to zero after completion.

- Data Transfer Costs: Be mindful of data transfer costs, especially across regions or availability zones. Design pipelines to minimize unnecessary data movement.

- Monitoring and Cost Allocation: Use cloud cost management tools to track ETL spending, identify cost drivers, and allocate costs to specific projects or departments for better accountability.
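The trade-off between always-on clusters and pay-per-use billing is simple arithmetic, sketched here in Python. The hourly rates in the example are hypothetical placeholders rather than real cloud prices; the point is the shape of the calculation, including the break-even point beyond which a dedicated cluster becomes cheaper.

```python
HOURS_PER_DAY = 24


def always_on_cost(hourly_rate, days=30):
    """Dedicated cluster: billed for every hour of the period, busy or idle."""
    return hourly_rate * HOURS_PER_DAY * days


def pay_per_use_cost(hourly_rate, job_hours_per_day, days=30):
    """Serverless-style billing: only the hours in which jobs actually run."""
    return hourly_rate * job_hours_per_day * days


def break_even_hours_per_day(dedicated_rate, serverless_rate):
    """Daily job hours above which the always-on cluster becomes the cheaper option."""
    return HOURS_PER_DAY * dedicated_rate / serverless_rate


if __name__ == "__main__":
    # Hypothetical rates: $2.00/hour dedicated, $3.00/hour serverless, 2 job-hours per day.
    print(always_on_cost(2.0))                 # 1440.0
    print(pay_per_use_cost(3.0, 2))            # 180.0
    print(break_even_hours_per_day(2.0, 3.0))  # 16.0
```

With these placeholder numbers, a job that runs two hours a day costs far less on pay-per-use billing, while a workload busy more than about 16 hours a day would favor the dedicated cluster.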

By integrating these best practices, organizations can build modern ETL processes that are not only powerful and efficient but also reliable, compliant, and cost-effective, forming a strong foundation for their data strategy.

The Future Landscape of ETL: AI, ML, and Data Mesh

The field of ETL is in a constant state of evolution, driven by advancements in artificial intelligence, machine learning, and emerging architectural paradigms like Data Mesh. These forces are poised to further automate and decentralize data integration, making ETL more intelligent, agile, and accessible.

AI/ML-Powered ETL for Automated Data Discovery and Transformation

Artificial intelligence and machine learning are increasingly being woven into ETL tools, transforming how data is discovered, prepared, and transformed. This shift promises to reduce manual effort, enhance accuracy, and accelerate time-to-insight.

- Automated Data Discovery and Profiling: AI algorithms can automatically scan diverse data sources, infer schemas, identify data types, and detect patterns or anomalies. This reduces the manual effort required for data cataloging and understanding new datasets. Machine learning can even suggest optimal data types or identify potential primary keys.

- Intelligent Data Cleansing and Matching: ML models can learn from historical data cleaning efforts to suggest or automatically apply corrections for common data quality issues (e.g., address standardization, duplicate record identification, fuzzy matching). This moves beyond rule-based cleansing to more adaptive and intelligent data quality management.

- Automated Transformation Recommendations: AI can analyze data usage patterns and business requirements to recommend optimal transformation logic. For example, it might suggest aggregations, joins, or feature engineering steps commonly used by data scientists for specific analytical tasks, accelerating the preparation of data for machine learning models.

- Predictive Maintenance for Pipelines: ML can be used to predict potential ETL pipeline failures by analyzing historical logs, resource utilization, and data flow patterns, allowing for proactive intervention before an outage occurs.
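As a point of comparison for ML-driven matching, a classic rule-plus-similarity baseline for duplicate detection fits in a few lines of Python using the standard library's `difflib`. This is a string-similarity heuristic, not a learned model; the abbreviation map and the 0.9 threshold are assumptions, and an ML-based matcher would instead learn how to weigh such signals from labeled duplicate pairs.

```python
import re
from difflib import SequenceMatcher
from itertools import combinations

# Hypothetical abbreviation map used for standardization before comparison.
ABBREVIATIONS = {"st": "street", "rd": "road", "ave": "avenue"}


def normalize(text):
    """Lowercase, replace punctuation with spaces, and expand abbreviations."""
    tokens = re.sub(r"[^\w\s]", " ", text.lower()).split()
    return " ".join(ABBREVIATIONS.get(tok, tok) for tok in tokens)


def likely_duplicates(values, threshold=0.9):
    """Return (i, j, score) for pairs whose normalized similarity meets the threshold."""
    pairs = []
    for (i, a), (j, b) in combinations(enumerate(values), 2):
        score = SequenceMatcher(None, normalize(a), normalize(b)).ratio()
        if score >= threshold:
            pairs.append((i, j, round(score, 2)))
    return pairs


if __name__ == "__main__":
    addresses = ["12 Main St.", "12 main street", "45 Oak Rd", "12 Maine Street"]
    print(likely_duplicates(addresses))
```

Comparing every pair is quadratic, so real matching pipelines usually first group candidates (for example, by postal code) and only compare records within a group.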

The goal of AI/ML-powered ETL is to make data integration more self-service, less dependent on specialized data engineers for routine tasks, and more adaptive to changing data landscapes.

Data Mesh Principles and Decentralized ETL

The Data Mesh is an emerging socio-technical paradigm that addresses the challenges of centralized data platforms in large, complex organizations. It proposes treating data as a product, owned and served by decentralized domain-oriented teams. This has significant implications for ETL:

- Domain-Oriented Data Ownership: Instead of a central data team managing all ETL, domain teams (e.g., marketing, sales, finance) become responsible for their own data products, including the ETL processes that prepare and expose that data.

- Self-Serve Data Infrastructure: A foundational layer of self-serve data infrastructure empowers domain teams with standardized tools and platforms to build and manage their own ETL pipelines without heavy reliance on a central team. This includes standardized APIs, data cataloging, and monitoring tools.

- Data as a Product: Data products, exposed via well-defined interfaces (e.g., APIs, streamed events, curated datasets), become the output of these decentralized ETL efforts. The focus shifts from raw data ingestion to delivering high-quality, discoverable, addressable, trustworthy, and secure data products.

- Federated Governance: While ETL becomes decentralized, a federated governance model ensures interoperability and adherence to global standards across domains, facilitating consistent data consumption.

In a Data Mesh architecture, modern ETL tools would need to support these decentralized operations, providing flexible deployment options, robust API integration, and strong metadata management to ensure discoverability and governance across domains.
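Federated governance is often implemented as automated checks that every domain's data product must pass before it is published. The Python sketch below assumes a hypothetical `DataProduct` descriptor (owner, schema, PII tags, freshness SLA) and a few illustrative global policies; it is not a standard data contract format.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Set


@dataclass
class DataProduct:
    """Hypothetical descriptor a domain team publishes alongside its data."""
    name: str
    domain: str
    owner: str
    schema: Dict[str, str]                      # column name -> type
    pii_columns: Set[str] = field(default_factory=set)
    freshness_sla_hours: Optional[int] = None


def governance_violations(product):
    """Check one product against global rules shared by all domains."""
    problems = []
    if not product.owner:
        problems.append("no accountable owner")
    if product.freshness_sla_hours is None:
        problems.append("no freshness SLA")
    unknown_pii = product.pii_columns - set(product.schema)
    if unknown_pii:
        problems.append(f"PII tags on unknown columns: {sorted(unknown_pii)}")
    return problems


if __name__ == "__main__":
    orders = DataProduct("orders_daily", "sales", "sales-data-team",
                         {"order_id": "string", "email": "string"}, {"email"}, 24)
    leads = DataProduct("leads", "marketing", "", {"lead_id": "string"}, {"phone"})
    print(governance_violations(orders))  # []
    print(governance_violations(leads))
```

Domains stay free to model their own data, while the shared checks keep every product discoverable, owned, and consistently tagged.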

The Convergence of Data Integration and Data Observability

As data pipelines become more complex and critical, the line between data integration and data observability is blurring. Data observability is about understanding the health and state of data systems, similar to how DevOps applies observability to applications.

- End-to-End Visibility: Future ETL solutions will deeply integrate observability features, providing real-time insights into data quality, schema changes, pipeline performance, data lineage, and data freshness across the entire data journey.

- Proactive Anomaly Detection: Beyond simple error alerting, advanced observability will use ML to detect subtle anomalies in data volume, schema drift, or data content that might indicate underlying issues before they impact downstream consumers.

- Automated Root Cause Analysis: When issues arise, observability tools will help pinpoint the exact source of the problem, whether it's a source system change, a transformation error, or a data quality issue, significantly reducing mean time to resolution.

- Impact Analysis: Understanding the downstream impact of a data quality issue or a pipeline failure will become automated, allowing data teams to prioritize fixes and communicate effectively with affected stakeholders.
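Two of the observability signals above, volume anomalies and schema drift, can be approximated with simple statistics and set arithmetic. The Python sketch below uses a z-score against recent daily row counts as a stand-in for the ML-based detection the text describes; the threshold of 3 standard deviations and the sample schemas are assumptions.

```python
from statistics import mean, stdev


def volume_anomaly(history, today, z_threshold=3.0):
    """Return True if today's row count is far outside recent history.

    history needs at least two values for stdev to be defined.
    """
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return today != mu  # perfectly stable history: any change is notable
    return abs(today - mu) / sigma > z_threshold


def schema_drift(expected, observed):
    """Compare column -> type mappings and report what changed."""
    shared = expected.keys() & observed.keys()
    return {
        "added": sorted(observed.keys() - expected.keys()),
        "removed": sorted(expected.keys() - observed.keys()),
        "retyped": sorted(c for c in shared if expected[c] != observed[c]),
    }


if __name__ == "__main__":
    daily_rows = [1000, 1020, 980, 1010, 990]
    print(volume_anomaly(daily_rows, 400))   # True
    print(volume_anomaly(daily_rows, 1005))  # False
    print(schema_drift({"id": "int", "amount": "float"},
                       {"id": "int", "amount": "string", "channel": "string"}))
```

Checks like these run after each load and feed the alerting layer, catching a silent drop in volume or a retyped column before downstream consumers do.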

The future of modern ETL is one where data pipelines are not just efficient at moving and transforming data but are also intelligent, self-healing, and transparent, ensuring that organizations can truly trust and leverage their most valuable asset: data.

Table 2: Key Future Trends in ETL

| Trend | Impact on ETL | Example Technologies/Concepts |
|---|---|---|
| AI/ML-Powered ETL | Automated data discovery, quality, and transformation suggestions; reduced manual effort. | ML-driven data profiling, intelligent data cleansing, transformation recommendations. |
| Data Mesh | Decentralized data ownership and ETL, data as a product, self-serve infrastructure. | Domain-oriented teams, self-serve platforms, federated governance. |
| Data Observability | End-to-end visibility, proactive anomaly detection, automated root cause analysis. | Real-time monitoring of data quality, schema drift, pipeline health, data lineage. |
| Serverless Everything | Further abstraction of infrastructure, pay-per-use, simplified operations. | Serverless compute for data pipelines, functions as a service for micro-transformations. |

Frequently Asked Questions (FAQ)

What is the primary difference between ETL and ELT?

Traditionally, ETL (Extract, Transform, Load) involved extracting data from sources, transforming it in a staging area, and then loading the cleaned data into a data warehouse. ELT (Extract, Load, Transform), which gained prominence with cloud data warehouses and data lakes, first extracts data and loads it directly into the target system (often raw), performing transformations within the powerful compute environment of the data warehouse or data lake itself. ELT offers greater flexibility and faster initial loading, and it preserves raw data for multiple uses.
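The ELT pattern can be demonstrated end to end with Python's built-in `sqlite3` module standing in for a cloud data warehouse: raw events are loaded untransformed, and the transformation then runs as SQL inside the target engine. The table names and sample events are hypothetical; note that the raw table remains available for other uses after the modeled table is built.

```python
import sqlite3

# Hypothetical raw events as they arrive from a source: amounts are still text.
RAW_EVENTS = [
    ("2024-03-01", "checkout", "19.99"),
    ("2024-03-01", "checkout", "5.01"),
    ("2024-03-02", "refund", "-5.01"),
    ("2024-03-02", "checkout", "10.00"),
]


def run_elt(conn):
    # Extract + Load: land the data unchanged in a raw table.
    conn.execute("CREATE TABLE raw_events (event_date TEXT, event_type TEXT, amount TEXT)")
    conn.executemany("INSERT INTO raw_events VALUES (?, ?, ?)", RAW_EVENTS)
    # Transform: build a modeled table with SQL executed by the target engine itself.
    conn.execute(
        """
        CREATE TABLE daily_net_revenue AS
        SELECT event_date, ROUND(SUM(CAST(amount AS REAL)), 2) AS net_revenue
        FROM raw_events
        GROUP BY event_date
        """
    )
    return conn.execute(
        "SELECT event_date, net_revenue FROM daily_net_revenue ORDER BY event_date"
    ).fetchall()


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    print(run_elt(conn))
    conn.close()
```

In a classic ETL flow, the cast and aggregation would happen in a separate staging step before anything reached the target, and the raw rows would typically not be kept there.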

Why are cloud ETL solutions preferred by many organizations today?

Cloud ETL solutions are preferred due to their inherent scalability, elasticity, and cost-effectiveness. Many offer serverless architectures, meaning users only pay for the compute resources consumed during job execution, eliminating the need for expensive upfront infrastructure investments. They also integrate seamlessly with other cloud services like data lakes and data warehouses, provide extensive managed connectors, and simplify maintenance by abstracting away infrastructure management.

How does real-time ETL benefit businesses?

Real-time ETL provides timely insights by processing data as it's generated, minimizing latency between an event and its analysis. This capability is crucial for use cases like fraud detection, personalized customer experiences (e.g., real-time recommendations), dynamic pricing, IoT monitoring, and immediate operational adjustments. It empowers businesses to react to opportunities and threats in near real time, driving competitive advantage and improved decision-making.
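The core of many real-time pipelines is windowed aggregation over an unbounded stream. The Python sketch below assigns each event to a fixed (tumbling) 60-second window and flags cards whose running total within a window exceeds a limit, a simplified fraud-detection rule. The event shape, the limit, and the function names are hypothetical; stream processors such as Apache Flink, Kafka Streams, or Spark Structured Streaming add what this sketch omits, including handling of late and out-of-order events and eviction of state for closed windows.

```python
from collections import defaultdict

# Hypothetical stream: (epoch_seconds, card_id, amount), in arrival order.
SAMPLE_EVENTS = [
    (0, "c1", 200.0),
    (10, "c1", 350.0),
    (30, "c2", 50.0),
    (65, "c1", 100.0),
]


def tumbling_window_totals(events, window_seconds=60):
    """Yield the running total for each event's (card, window) as events arrive."""
    totals = defaultdict(float)  # grows without bound here; real engines evict closed windows
    for ts, card, amount in events:
        window_start = ts - ts % window_seconds
        totals[(card, window_start)] += amount
        yield card, window_start, totals[(card, window_start)]


def flag_suspicious(events, limit=500.0, window_seconds=60):
    """Return (card, window_start) pairs whose spend within a window exceeded the limit."""
    flagged = set()
    for card, window_start, running_total in tumbling_window_totals(events, window_seconds):
        if running_total > limit:
            flagged.add((card, window_start))  # a live system would raise an alert here
    return flagged


if __name__ == "__main__":
    print(flag_suspicious(SAMPLE_EVENTS))  # {('c1', 0)}
```

Because the generator emits a result per event, a decision is available as soon as the threshold is crossed rather than after a nightly batch.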

What role does data governance play in modern ETL processes?

Data governance is fundamental in modern ETL. It ensures that data is high quality, secure, compliant with regulations (e.g., GDPR, HIPAA), and trustworthy throughout its lifecycle. Modern ETL tools incorporate features like data masking, access control, lineage tracking, and automated quality checks to enforce governance policies. This ensures that the data consumed by analytics and AI applications is reliable and meets organizational standards.
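Data masking in a pipeline is often a per-column policy applied before data lands in shared zones. The Python sketch below pairs keyed hashing (HMAC-SHA256), which replaces an identifier with a stable token so joins still work, with partial masking of emails for display. The key handling, column names, and policy format are assumptions; under GDPR, pseudonymized data can still count as personal data, so this complements rather than replaces access controls.

```python
import hashlib
import hmac

# Hypothetical key; in practice load it from a secrets manager, never hard-code it.
SECRET_KEY = b"replace-with-a-managed-secret"


def pseudonymize(value):
    """Keyed hash: the same input always yields the same token, so joins still work."""
    digest = hmac.new(SECRET_KEY, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return digest[:16]  # truncated to 64 bits for readability; keep more to reduce collisions


def mask_email(email):
    """Partial masking for display: keep the first character and the domain."""
    local, _, domain = email.partition("@")
    return f"{local[:1]}***@{domain}"


MASKERS = {"pseudonymize": pseudonymize, "mask_email": mask_email}


def apply_policy(record, policy):
    """Apply the masking function named in the policy to each listed column."""
    return {col: MASKERS[policy[col]](val) if col in policy else val
            for col, val in record.items()}


if __name__ == "__main__":
    row = {"patient_id": "P-001", "email": "jane@example.org", "visits": 3}
    print(apply_policy(row, {"patient_id": "pseudonymize", "email": "mask_email"}))
```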

Can ETL processes be fully automated?

While full, end-to-end automation of ETL is an aspirational goal, modern tools have achieved significant levels of automation. Features like automated schema discovery, change data capture (CDC), workflow orchestration (e.g., Apache Airflow), and AI-driven data quality checks greatly reduce manual effort. However, human oversight is still often required for complex transformation logic, error resolution, and adapting to evolving business requirements or data sources. The trend is towards increasingly intelligent automation, reducing the need for constant manual intervention.
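Orchestrators such as Apache Airflow model a pipeline as a directed acyclic graph of tasks. The dependency-ordering idea underneath can be sketched with Python's standard-library `graphlib` (Python 3.9+); this is not Airflow's API, and the task names are hypothetical. Tasks returned in the same `get_ready()` batch have no dependencies on each other, which is what lets a real orchestrator run them in parallel.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each task maps to the set of tasks it depends on.
PIPELINE = {
    "extract_orders": set(),
    "extract_customers": set(),
    "validate": {"extract_orders", "extract_customers"},
    "transform": {"validate"},
    "load_warehouse": {"transform"},
    "refresh_dashboard": {"load_warehouse"},
}


def run_pipeline(dag, run_task):
    """Run tasks in dependency order and return the order they ran in."""
    sorter = TopologicalSorter(dag)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    order = []
    while sorter.is_active():
        # Tasks in one ready batch are independent; sorted only for deterministic output.
        for task in sorted(sorter.get_ready()):
            run_task(task)  # a real orchestrator would add retries, timeouts, and logging
            order.append(task)
            sorter.done(task)
    return order


if __name__ == "__main__":
    print(run_pipeline(PIPELINE, lambda task: print("running", task)))
```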

What are the common challenges in implementing modern ETL?

Common challenges include managing data quality from diverse sources, handling schema evolution as source systems change, ensuring pipeline scalability for growing data volumes, optimizing costs in cloud environments, securing sensitive data during transfer and transformation, and managing the complexity of real-time data integration. Additionally, bridging the skill gap for data engineers proficient in modern distributed computing frameworks can be a significant hurdle.

Conclusion and Recommendations

The journey of data integration has dramatically evolved, transforming from rigid, batch-oriented processes into dynamic, scalable, and intelligent modern ETL. In an accelerating digital landscape where data is the new currency, effective ETL is no longer a mere technical requirement but a strategic imperative. The tools and methodologies available in 2024-2025 empower organizations to not only keep pace with the explosion of data but to proactively harness its potential, turning raw information into profound business insights.

Modern ETL processes, characterized by their cloud-native architectures, real-time capabilities, robust automation, and embedded data governance, are the foundational layers upon which data-driven strategies are built. From optimizing financial fraud detection and personalizing e-commerce experiences to advancing healthcare research and enabling predictive maintenance in manufacturing, the applications are as diverse as they are impactful. By leveraging leading commercial platforms, flexible open-source tools, or integrated cloud solutions, businesses can construct resilient data pipelines that feed high-quality, timely data to analytics platforms, machine learning models, and operational systems.

Looking ahead, the convergence of AI/ML, the principles of Data Mesh, and advanced data observability promises an even more intelligent and autonomous future for ETL. Data pipelines are expected to become increasingly self-optimizing and self-healing, further democratizing data access and accelerating the journey from data to wisdom. To thrive in this evolving landscape, organizations must embrace these modern paradigms. Investing in the right tools, fostering a culture of data quality, adopting agile development practices, and continuously upskilling data teams are crucial steps. By doing so, businesses can ensure their data strategies remain robust, competitive, and truly transformative, driving innovation and sustainable growth in the digital age.

Site Name: Hulul Academy for Student Services

Email: info@hululedu.com

Website: hululedu.com